Most research leaders don’t realize half their new “team” doesn’t have a CV.

Not long ago, leadership in research meant building large teams:

→ clinicians, data analysts, statisticians, coders, writers, project managers, regulatory experts, and lab scientists.

Each role had clear boundaries.

But that model is getting harder to sustain.

With a shoestring research budget—and recent cuts tightening the margin—I was forced to rethink how to keep projects moving.

I didn’t have the funds to build out a full team, but the demands of clinical research weren’t slowing down.

That pressure pushed me to experiment, refine, and eventually commit to a different model of leadership: one where people and AI agents work side by side.

You may not have the skills to do 10 jobs at once.

But with AI, you suddenly can – at least at the 60–70th percentile.

Enough to design a figure, check code, draft a budget, or summarize a dataset.

So what’s the leader’s role now?

To become a manager of AI agents (as well as people).

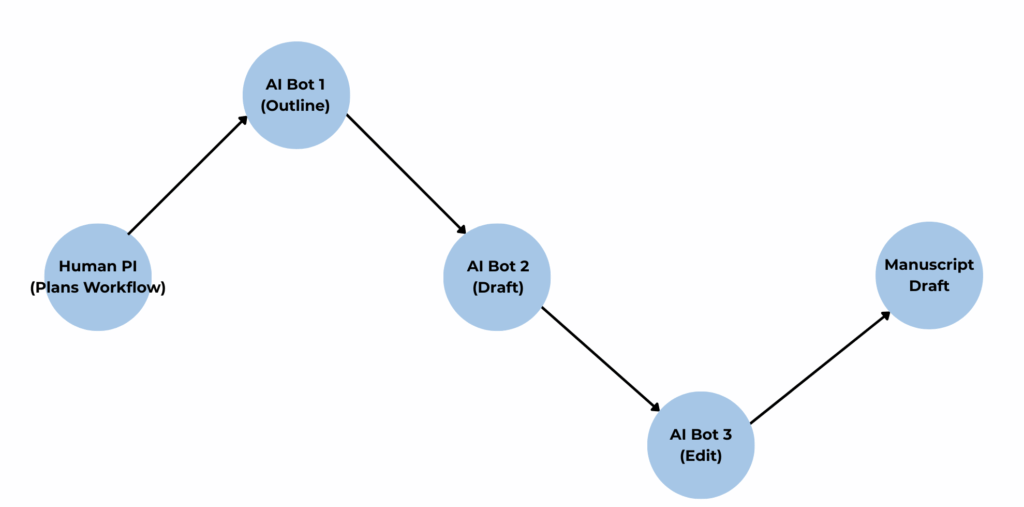

What do we mean by “agents”?

Think of agents as AI systems with varying levels of autonomy.

- Level 1 — Autonomy of Action. Often called agentic workflows.

Example: a manuscript-writing pipeline where one AI creates the outline, another drafts, and a third edits. Humans still set the plan; AI just executes.

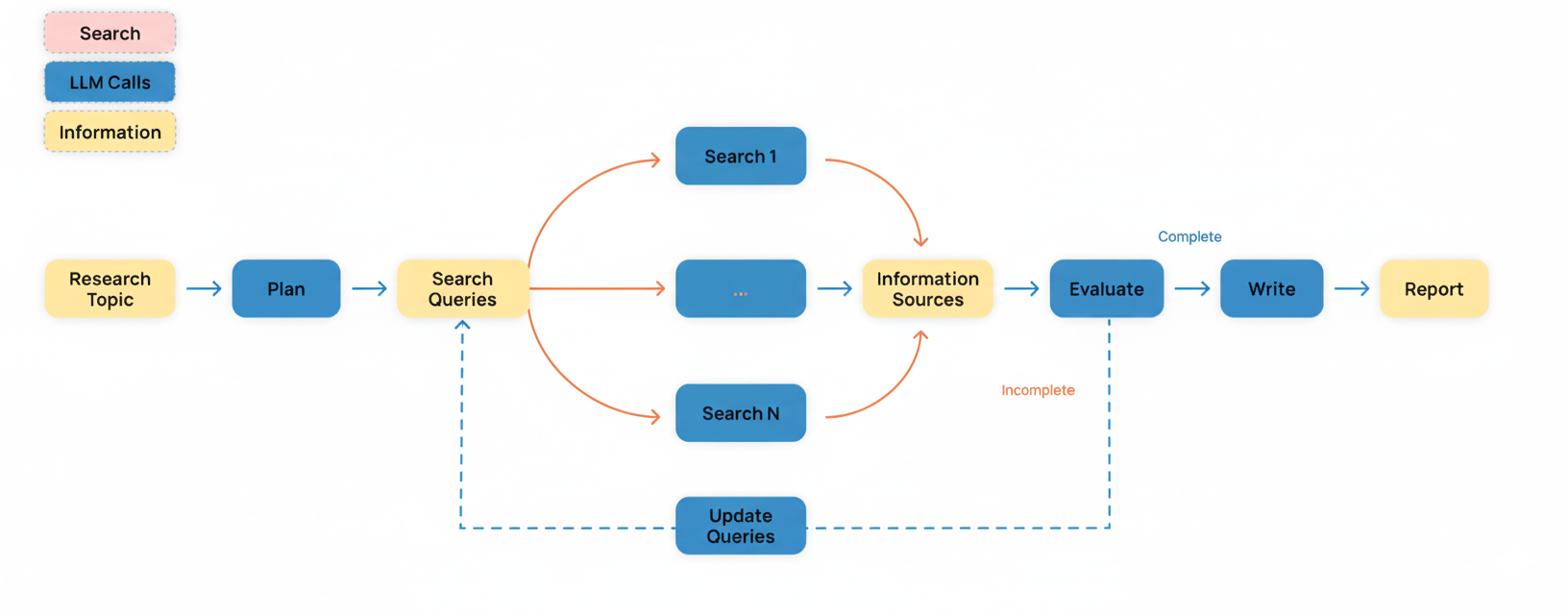

- Level 2 — Autonomy of Planning. These agents can break down goals and decide how to act. A “deep research agent” is a good example: you give it a question, and it designs the search strategy and evidence synthesis.

- Level 3 — Autonomy of Models. Here, agents can choose or swap models themselves. We aren’t there yet in terms of agent architecture.

For most of our work in research today, we’re in Level 1 territory—we do the planning, and AI handles the work. That’s what this post focuses on.
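A Level 1 workflow like the manuscript pipeline above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real integration: `call_model` is a hypothetical stand-in for whatever approved model endpoint you use, and here it only tags its input so the sketch runs offline. The human fixes the order of the steps; each agent only executes its own step.

```python
from typing import Callable, List, Tuple


def call_model(role: str, text: str) -> str:
    """Hypothetical stand-in for a real model call; it only tags the text."""
    return f"[{role}] {text}"


# The human-defined plan: a fixed, ordered list of (agent role, instruction).
PLAN: List[Tuple[str, str]] = [
    ("outliner", "Create an outline"),
    ("drafter", "Draft from the outline"),
    ("editor", "Edit the draft"),
]


def run_pipeline(topic: str, model: Callable[[str, str], str] = call_model) -> List[str]:
    """Run each step in order, feeding every output into the next step."""
    outputs = []
    current = topic
    for role, instruction in PLAN:
        current = model(role, f"{instruction}: {current}")
        outputs.append(current)  # keep every intermediate for human review
    return outputs


steps = run_pipeline("early MI under 45")
for step in steps:
    print(step)
```

Keeping every intermediate output, rather than only the final draft, is what lets a human check each handoff.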

Managing Hybrid Teams

Think of your role in 3 layers:

- Vision (North Star).

- You still define the research question, the outcome that matters, and the ethical guardrails.

- Resources (People + Models).

- Yesterday’s resources were staff.

- Today, they’re also AI agents – each with strengths, weaknesses, and “personalities.”

- Your job is to assemble the right mix.

- Process (Coordination).

- You must design the workflow so that human and AI outputs complement each other rather than compete, confuse, or dilute rigor.

The timeless job of a manager hasn’t gone away. It’s just been extended.

In clinical research, this shift is profound.

Leaders are no longer just mentors of junior colleagues.

We are architects of hybrid teams: humans and AI, aligned to produce reliable, ethical science.

The question is no longer, “Do we need managers?”

It’s: Can you manage in a world where half your team doesn’t have a career path, emotions, or a CV – but still shapes your results?

5 strategies for leading your research team in the Age of Hybrid Teams (People + AI):

1. Define the research agenda — Cyborg mode (blended back-and-forth)

- Tie every AI task to your “job to be done”: what decision will this output inform (study design, analysis, writing)? If it doesn’t change a decision, it’s noise.

- Clarify success metrics up front (e.g., draft quality, time saved, error rate) and keep a human in the loop for judgment calls.

- Invite AI deliberately, not blindly: use it wherever legal/ethical barriers allow, then learn its strengths/limits on your problems.

- Protect patients and your team: never input PII/PHI into consumer models; default to enterprise or local approved solutions.

Example — Studying Early Myocardial Infarction (<45):

- North Star: “Identify modifiable risk profiles for first MI <45 to inform targeted prevention in primary care.”

- Decision this work will inform: Build a clinic checklist + referral criteria for high-risk 20–44-year-olds.

- Success metrics (set upfront):

- Time: first full draft in ≤14 days.

- Quality: ≥90% references verified to primary sources; reproducible codebook + R script.

- Impact: at least one clear practice implication for PCPs.

- Cyborg brainstorm (you + LLM):

- You: list 5 plausible mechanisms (familial hypercholesterolemia, Lp(a), stimulant use, autoimmune disease, pregnancy-related HTN).

- AI (chat back-and-forth): pressure-tests each mechanism; suggests data elements you likely have (problem lists, meds, labs) vs. those you’ll need (Lp(a), genetic markers).

- Micro-prompt you can paste:

PERSONA(l): “Act as a cardiology clinical researcher.” GOAL: “Pressure-test my project’s North Star & success metrics for early MI <45.” OUTPUT: “List gaps, required variables, feasibility risks, and a 1-sentence impact statement.” AVOID: “No generic advice.” LENS: “EHR cohort at an academic center; ICD + labs available; genetics partial.”
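If you reuse this five-field structure often, it may help to keep it as a small template instead of retyping it. The sketch below is one possible way to do that; the class name `MicroPrompt` and its `render` method are illustrative assumptions, not part of any library. It refuses to render a prompt with an empty field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroPrompt:
    """Hypothetical container for the five prompt fields used above."""
    persona: str
    goal: str
    output: str
    avoid: str
    lens: str

    def render(self) -> str:
        """Join the five fields into a paste-ready prompt, one field per line."""
        fields = [
            ("PERSONA", self.persona),
            ("GOAL", self.goal),
            ("OUTPUT", self.output),
            ("AVOID", self.avoid),
            ("LENS", self.lens),
        ]
        missing = [label for label, value in fields if not value.strip()]
        if missing:
            raise ValueError(f"Empty prompt fields: {', '.join(missing)}")
        return "\n".join(f"{label}: {value}" for label, value in fields)


prompt = MicroPrompt(
    persona="Act as a cardiology clinical researcher.",
    goal="Pressure-test my project's North Star & success metrics for early MI <45.",
    output="List gaps, required variables, feasibility risks, and a 1-sentence impact statement.",
    avoid="No generic advice.",
    lens="EHR cohort at an academic center; ICD + labs available; genetics partial.",
)
print(prompt.render())
```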

2. Assign the right role — Name your “agents” + validators

- Match tasks to AI’s “jagged frontier”. That means leaning on AI where it’s consistently strong—brainstorming ideas, summarizing long texts, restructuring drafts, or scaffolding R code. But know its weak spots. It stumbles on math-heavy edge cases, nuanced statistics, or interpreting messy clinical data.

- Create named Level 1 agents with scopes: Evidence Summarizer, Code Reviewer, Figure Draftsman, Methods Stylist. Don’t overload one agent. (Quality improves when roles are narrow.)

- Specify guardrails in the prompt (clear context, what to avoid, desired output format, token limits) and record who validates each agent’s output.

- For any data-touched role, confirm privacy settings (model training off) or use institutionally approved models. If you are working with identifiable patient information, you will need to use your institutional AI models or run local models on your computer.

Example (continued): Studying Early Myocardial Infarction (<45):

- People (owners):

- You (PI): final judgment on question, analysis choices, message.

- Statistician: model diagnostics + sensitivity analyses.

- AI agents (all Level 1):

- Evidence Summarizer: builds a live evidence grid (agree/contradict/neutral) on risk factors in young MI.

- Codelist Scout: proposes ICD/CPT/med list candidates; flags look-alikes (e.g., “chest pain, unspecified”).

- R Scaffolder: drafts tidyverse pipelines, table shells, and ggplot skeletons.

- Methods Stylist: converts your notes into clear, STROBE-aligned prose.

- Validation plan (who checks what):

- Codelists → clinician review of 50 charts.

- Evidence grid → you verify PDFs/DOIs for every claim.

- R code → statistician reruns and spot-checks on sample.

- Note on tools: R support is strongest; Stata help has improved but keep prompts tighter and provide a toy dataset for the model to mimic.
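One lightweight way to record who validates each agent is a small registry that flags any agent without a named human checker. The sketch below is an assumption about how you might store this, not a prescribed format. The agent names and checks come from the example above; the validation plan there names checks for three of the four agents, so the fourth is left unassigned here to show how the gap surfaces.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Agent:
    name: str
    scope: str
    validator: Optional[str] = None   # the human who checks this agent's output
    check: Optional[str] = None       # what that human actually does


AGENTS = [
    Agent("Evidence Summarizer", "evidence grid on risk factors in young MI",
          "PI", "verify PDFs/DOIs for every claim"),
    Agent("Codelist Scout", "ICD/CPT/med list candidates",
          "Clinician", "review of 50 charts"),
    Agent("R Scaffolder", "tidyverse pipelines, table shells, ggplot skeletons",
          "Statistician", "rerun and spot-check on sample"),
    Agent("Methods Stylist", "STROBE-aligned prose from notes"),  # no validator yet
]


def unvalidated(agents: List[Agent]) -> List[str]:
    """Return the names of agents that have no human validator assigned."""
    return [agent.name for agent in agents if not agent.validator]


print(unvalidated(AGENTS))  # ['Methods Stylist']
```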

3. Design the workflow — Keep your current flow; add clean handoffs

- Keep your existing flow (if working well); insert AI where it removes friction: literature scan → AI evidence grid → your synthesis → AI draft → your revision → final checks. (Human checkpoints at each arrow.)

- Add observability: log prompts, versions, and sources; “test, test, test” with small pilots before scaling. Collect metadata on outputs and known failure modes.

- Standardize outputs (tables/JSON) so handoffs are clean across agents and people; require source provenance for every claim.

- Ensure a human reviewer signs off on sensitive steps and resolves ambiguities (AI augments; it doesn’t own final judgment).
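Logging prompts, versions, and sources does not need special tooling. A minimal sketch, assuming one JSON object per line in a plain text file: the field names, the `placeholder-model-v1` version string, and the `doi:PLACEHOLDER` source are all illustrative, not a standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List


def log_entry(agent: str, model_version: str, prompt: str,
              sources: List[str], reviewer: str) -> dict:
    """Build one log record; the hash makes it easy to spot reused prompts."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "model_version": model_version,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt": prompt,
        "sources": sources,
        "reviewer": reviewer,
    }


def append_log(path: str, entry: dict) -> None:
    """Append the record as a single JSON line (JSONL)."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(entry) + "\n")


entry = log_entry(
    agent="Evidence Summarizer",
    model_version="placeholder-model-v1",   # hypothetical version label
    prompt="Summarize risk factors for first MI under 45.",
    sources=["doi:PLACEHOLDER"],            # placeholder, not a real reference
    reviewer="PI",
)
print(json.dumps(entry, indent=2))
```

Calling `append_log("prompt_log.jsonl", entry)` after each agent run gives you a file you can grep or load later when an output looks wrong.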

Example (continued): Studying Early Myocardial Infarction (<45):

Flow: Literature scan → AI Evidence Grid → Your synthesis → AI outline → Your edits → AI first draft → Your expert rewrite → Submission package

- Handoff artifacts (what each step outputs):

- Evidence grid (CSV/JSON): study, N, design, effect sizes, population age band, agree/contradict.

- Analysis plan (1 page): primary/secondary outcomes, covariates, DAG sketch, planned sensitivity analyses.

- Table shells: Table 1 (baseline), Table 2 (multivariable ORs/HRs), Figure 1 (age-by-risk interaction).

- Micro-prompt (analysis design, Cyborg):

“Given an MI <45 cohort and controls aged 20–44, propose 3 model specs (logistic, time-to-event, and causal adjustment set). Return: covariate list, coding, and how to test non-linearity. Flag likely confounders and mediators.”

- Reality check: you run the code locally; AI drafts and explains. Never let an agent auto-query your production data.
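A clean handoff is easier to enforce if each evidence-grid row is checked before a person or another agent consumes it. The sketch below assumes the grid columns listed above plus a `source` field for provenance; the exact key names are my own choice, and the two sample rows are placeholders, not real studies.

```python
from typing import List

REQUIRED = ("study", "n", "design", "effect_size", "age_band", "stance", "source")
STANCES = {"agree", "contradict", "neutral"}


def validate_row(row: dict) -> List[str]:
    """Return a list of problems; an empty list means the row is clean."""
    problems = [f"missing {key}" for key in REQUIRED if row.get(key) in (None, "")]
    stance = row.get("stance")
    if stance and stance not in STANCES:
        problems.append(f"invalid stance: {stance}")
    n = row.get("n")
    if n not in (None, "") and (not isinstance(n, int) or n <= 0):
        problems.append("n must be a positive integer")
    return problems


# Placeholder rows for illustration only.
grid = [
    {"study": "Placeholder Study A", "n": 1200, "design": "cohort",
     "effect_size": "OR (placeholder)", "age_band": "20-44",
     "stance": "agree", "source": "doi:PLACEHOLDER"},
    {"study": "Placeholder Study B", "n": 300, "design": "case-control",
     "effect_size": "HR (placeholder)", "age_band": "20-44",
     "stance": "supports", "source": ""},
]

for row in grid:
    print(row["study"], validate_row(row))
```

The second row fails twice: it has no source, and its stance is not one of the three allowed labels.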

4. Audit outputs for rigor — Treat Level 1 AI agents like a junior analyst with you as the supervisor

- Run a “junior-analyst Quality Control” on AI just like you would on a trainee: check references, re-compute numbers, and replicate key steps.

- Follow field-standard citation practice: limit background to the most relevant sources; place comparisons in the Discussion; verify to originals.

- Governance matters: document model choice, testing, and consent/notification where applicable; ensure equity and harm-avoidance reviews.

- Prefer explainable, trial-validated tools for clinical decisions; lack of transparency undermines scientific value and trust.

Example (continued): Studying Early Myocardial Infarction (<45):

- Evidence QC:

- Verify all citations (roughly 20 to 25% contain some hallucinated detail, especially author names); open the PDFs; verify the exact numbers the AI quoted (CI, units, population). Replace anything “close but off.”

- Label each citation: primary vs. review; discard grey literature for key claims.

- Code QC (R focus):

- Recompute 1–2 results with a different function or approach (e.g., `glm()` vs. `tidymodels`).

- Sensitivity set: remove smokers; stratify by sex; exclude FH phenotype; repeat models.

- Replicability: push to a versioned repo with `renv::snapshot()`, lock file, and a knit-to-HTML report.

- Writing QC:

- Ask AI to propose 3 alternative causal stories from the same results; you select the one consistent with data + prior.

- Require provenance: every statistic in the Discussion traces to a result or citation.

- Micro-prompt (QC pack):

“Generate a QC checklist for my early-MI analysis & draft. Sections: data validity, model diagnostics, sensitivity analyses, citation verification, reproducibility artifacts. Keep to 20 checks.”
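The "recompute with a different approach" check from the Code QC list can be illustrated in Python with an odds ratio. The sketch computes it once from the 2x2 table and once as the exponentiated slope of a logistic model fitted by Newton-Raphson. The counts are synthetic and the function names are my own. For a single binary exposure the two answers should agree, so a mismatch points to a coding error.

```python
import math


def odds_ratio_cross_product(a: int, b: int, c: int, d: int) -> float:
    """OR from a 2x2 table: a, b = exposed cases/controls; c, d = unexposed."""
    return (a * d) / (b * c)


def odds_ratio_logistic(a: int, b: int, c: int, d: int, iterations: int = 25) -> float:
    """OR as exp(slope) of a one-predictor logistic model (Newton-Raphson)."""
    groups = [(0, c + d, c), (1, a + b, a)]  # (exposure x, group size n, cases y)
    b0 = b1 = 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, n, y in groups:
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = n * p * (1.0 - p)
            g0 += y - n * p            # gradient w.r.t. intercept
            g1 += x * (y - n * p)      # gradient w.r.t. slope
            h00 += w
            h01 += x * w
            h11 += x * x * w
        det = h00 * h11 - h01 * h01
        # Newton step: solve the 2x2 system with the explicit inverse.
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return math.exp(b1)


# Synthetic counts for illustration only.
table = dict(a=30, b=70, c=15, d=85)
or_table = odds_ratio_cross_product(**table)
or_model = odds_ratio_logistic(**table)
print("methods agree:", math.isclose(or_table, or_model, rel_tol=1e-6))
```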

5. Adapt to rapid change — Update models, not your standards

- Assume last quarter’s limits may be obsolete; re-evaluate agent scopes and swap models as capabilities shift—without loosening your guardrails.

- Keep an ethical/risk framework steady (accuracy, safety, bias mitigation, sustainability) while updating concrete practices.

- Schedule periodic “red team” tests and refresh training/policies for staff so your human-in-the-loop stays sharp.

- Track explainability and real-world performance; when models gain clarity or pass trials, expand use—when they regress, roll back.

Example (continued): Studying Early Myocardial Infarction (<45):

- Quarterly review (30 min):

- Swap in the latest LLM for R Scaffolder if tests show fewer code errors.

- Keep your guardrails static: PHI handling, citation verification, human sign-off, reproducibility docs.

- Red-team drill:

- Give the agent a deliberately ambiguous question (e.g., “Is cannabis protective in young MI?”). Ensure it exposes uncertainty and refuses overclaiming.

- Roll-forward/rollback rule:

- If a model regresses (hallucinated refs, worse code), roll back to the previous one for critical steps.

- Journal fit refresher:

- Have AI re-map your paper to 2 alternative journals’ scope & formatting—you make the final call.
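The swap and rollback rules above can be written down as an explicit decision, so the quarterly review is a comparison rather than a feeling. This is a minimal sketch under assumptions: both models are scored on the same fixed test sets, the two error counts are the only criteria, and the names `model-A` and `model-B` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    model: str
    code_errors: int         # failing cases on a fixed code-scaffolding test set
    hallucinated_refs: int   # fabricated references on a fixed citation test set


def choose_model(current: EvalResult, candidate: EvalResult) -> str:
    """Adopt the candidate only if code improves and references do not regress."""
    improves_code = candidate.code_errors < current.code_errors
    no_ref_regression = candidate.hallucinated_refs <= current.hallucinated_refs
    if improves_code and no_ref_regression:
        return candidate.model
    return current.model


current = EvalResult("model-A", code_errors=6, hallucinated_refs=2)
better = EvalResult("model-B", code_errors=3, hallucinated_refs=2)
regressed = EvalResult("model-B", code_errors=3, hallucinated_refs=5)

print(choose_model(current, better))     # model-B
print(choose_model(current, regressed))  # model-A
```

The thresholds change with each model generation; the guardrail that references must not get worse stays fixed.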

Putting it all together, this is what a hybrid workflow may look like:

Walkthrough: From idea → draft on “Early MI (<45)” (Cyborg → Centaur)

A) Brainstorm/refine the idea (Cyborg)

- You: “Hypothesis: autoimmune disease + pregnancy HTN history + Lp(a) elevate MI risk <45 beyond LDL alone.”

- AI: returns variable list + feasible proxies (e.g., preeclampsia codes if pregnancy HTN missing), suggests DAG.

- Output: 1-page concept note + variable map.

B) Study design (Cyborg)

- You draft inclusion/exclusion, primary outcome (first MI), comparators, covariates.

- AI checks against STROBE; generates table shells and a back-of-envelope power calculation.

- You finalize.

C) Analysis design (Cyborg → Centaur boundary)

- AI drafts R pipelines for cohort construction, missingness report, model specs, figure skeletons.

- You decide final model, interactions (e.g., sex×FH), and sensitivity analyses.

- Note: if using Stata or SAS, give smaller chunks (do-files by section); quality is “catching up,” so expect more manual edits.

D) Run analysis (Level 1 agent-assisted, you in control)

- AI proposes code; you run locally, inspect residuals, and adjust.

- Outputs: HTML QC report, renv lockfile, results tables.

- Reproducibility and explainability are key here.

- Suggested workflow: run your analysis → knit `qc_report.html` → call `renv::snapshot()` → commit `qc_report.html`, `renv.lock`, and your code to the repo.

- This makes sure your results are documented and your environment is frozen, so a reviewer/colleague/statistician can restore and re-run exactly what you did.
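Before committing, a quick check that both reproducibility artifacts exist can catch a forgotten step. The sketch below is an optional helper, not part of renv or any R tooling: it only looks for the two file names mentioned above in a project folder. The temporary folder in the demo stands in for your project directory.

```python
import tempfile
from pathlib import Path
from typing import List

REQUIRED_ARTIFACTS = ("qc_report.html", "renv.lock")


def missing_artifacts(project_dir: str) -> List[str]:
    """Return the required artifact files that are absent from the folder."""
    root = Path(project_dir)
    return [name for name in REQUIRED_ARTIFACTS if not (root / name).is_file()]


# Demo in a throwaway folder: only the QC report has been produced so far.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "qc_report.html").write_text("<html></html>", encoding="utf-8")
    demo_missing = missing_artifacts(tmp)

print(demo_missing)  # ['renv.lock']
```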

E) Writing (Centaur — clear division of labor)

- You (human first): pick 2–3 key findings (e.g., “FH phenotype OR 3.2; pregnancy HTN history OR 1.8; Lp(a) high OR 2.1 independent of LDL”), craft core message and implications for PCP triage.

- AI: compiles an evidence table, drafts outline and then first full draft (Intro/Methods templated, Results from your tables, Discussion scaffolded by your message).

- You: rewrite claims, integrate field nuance, trim overreach.

- AI: handles cover letter, journal formatting, and keyword/abstract variants for two backup journals.

F) Peer review & revision (Cyborg)

- You: decide what to accept/clarify/contest; draft point-by-point reasoning.

- AI: rewrites responses in reviewer-friendly tone, pulls exact line references, generates tracked-changes boilerplate.

- You: final voice and science check.

G) Submission logistics (Human-led)

- Avoid agentic auto-submission for now (security & compliance concerns). You click submit.

Quick role cheat-sheet for your team

- You (PI): question, causal story, final judgment, key findings & implications.

- Statistician: diagnostics, sensitivity, interpretation guardrails.

- AI agents (Level 1): evidence grid, code scaffolding (esp. R), formatting, outlines, first drafts, tone refactoring, cover letter.

- Always human-owned: core message, novel interpretation, ethical decisions, submission.

The real risk isn’t AI replacing scientists.

It’s research leaders failing to manage the AI agents sitting beside them.

And here’s the part worth remembering:

For many of us, this shift isn’t a luxury. It’s survival.

Limited budgets and lean resources mean we can’t just keep adding more staff to solve every problem.

Managing hybrid teams—humans plus AI agents—isn’t about chasing efficiency for its own sake.

It’s about keeping the science moving forward when traditional models of staffing no longer fit.

💬 How are you rethinking your role as a research leader in this new era – mentor, manager, or AI orchestrator?

PROMPT OF THE WEEK

Data exploration AI prompt: Understand, analyze, and visualize spreadsheets

You are an experienced data analyst and exploratory data analysis coach.

Your job:

First, ask me to paste or upload my dataset (CSV, Excel, JSON, etc.).

Once I provide it, perform a structured data exploration:

Data Overview: Show number of rows, columns, list of key columns with data types, and summarize what the dataset seems to represent.

Data Quality Check: Identify missing values, duplicates, inconsistent formats, or anything unusual.

Early Exploration: Provide simple descriptive statistics (mean, median, min, max, counts) and highlight interesting distributions or outliers.

Visualize Insights: Suggest (or generate, if possible) appropriate charts or graphs (bar chart, histogram, scatter plot, line chart) to best illustrate the findings.

Exploration Roadmap: Suggest next steps for deeper analysis, including what relationships or visualizations might be most informative.

Rules:

Focus on helping me understand the data first — do not jump to modeling or hypothesis testing.

Use clear, plain language as if explaining to a non-technical researcher.

When possible, suggest or generate visualizations (histograms, bar charts, scatter plots, etc.) to help me see the data better.

Now ask me: “Please paste or upload your dataset so we can start exploring.”

P.S. Research Boost AI is live. This is an agentic AI system built on the same principles that I describe here. It turns your scattered notes into well-structured manuscript drafts with vetted, high-quality citations in hours, not weeks. Zero complicated prompting required.

Sign up here to get 5,000 words FREE today:

https://researchboost.com/