Last month, I got 17 peer review requests.

(I asked Claude Cowork to count this for me.)

Everyone I know who still takes peer review seriously is exhausted.

And the volume of polished, confident, methodologically hollow manuscripts is rising.

A Science analysis of 2.1M+ preprints found that detected LLM adoption was associated with large jumps in paper production, ranging from 23.7% to 89.3% depending on field and author background.

At the same time, top conferences are getting flooded.

NeurIPS reported 21,575 submissions in 2025, up from 9,467 in 2020.

Nature reports that ICML 2026 received more than 24,000 submissions.

If you are still doing peer review the old way, you already feel this.

Your inbox is not a badge of honor.

It is a warning sign.

So yes, AI will end up inside peer review.

And in some places, it already has.

OpenRxiv is already integrating AI-generated manuscript feedback into bioRxiv and medRxiv.

The only real choice is what we ask it to do.

Internal and external review are different jobs

Internal review is what you do before submission.

External review is what journals rely on to decide what enters the record.

Those are not interchangeable.

A single AI tool should not serve both.

Here are the 2 uses I think we should fight for:

1. Internal peer review of your own paper

This is where AI makes the most sense.

Because you are not outsourcing scientific judgment.

You are adding a ruthless, consistent pre-submission critic.

Stanford’s NEJM AI study compared GPT-4 feedback with human reviewer comments across Nature-family journals and ICLR.

They found overlap comparable to human-to-human overlap, and in a prospective user study, 57.4% of users found the feedback helpful or very helpful.

That does not mean the model is always “right”.

It means it can be a fast, consistent critic that catches what you missed during your midnight sprint.

Here is a practical way to use it.

The 12-minute AI pre-review protocol

- Ask for “fatal flaws first.” What would justify immediate rejection based on design, bias, stats, or outcome definition?

- Force claim-to-evidence tracing. For each main conclusion, list the exact table, figure, or analysis that supports it. Flag any claim with no anchor.

- Run a methods consistency check. Does the cohort definition match the flow diagram? Do inclusion criteria match the baseline table? Do the stated models match the variables described?

- Run a stats red-flag scan. Missingness handling, multiplicity, confounding control, immortal time bias, data leakage, overfitting.

- Ask the reviewer questions you dread. “What alternative explanation fits the data equally well?” “What sensitivity analysis would change your conclusion?”

The goal is not a pleasant review.

The goal is to surface uncomfortable questions while you still control the draft.
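If you want to run the protocol the same way every time, the five steps above can be sketched as a small prompt builder. This is only an illustration: `ReviewStep`, `PROTOCOL`, and `build_prompts` are hypothetical names, the instruction wording is a paraphrase of the list above, and the code does not call any model. You paste each prompt into whichever approved tool you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewStep:
    name: str
    instruction: str


# The five protocol steps, paraphrased from the list above (wording is an assumption).
PROTOCOL = [
    ReviewStep("fatal_flaws", "List fatal flaws first: anything in design, bias, "
               "statistics, or outcome definition that would justify immediate rejection."),
    ReviewStep("claim_tracing", "For each main conclusion, name the exact table, figure, "
               "or analysis that supports it. Flag any claim with no anchor."),
    ReviewStep("methods_consistency", "Check that the cohort definition matches the flow "
               "diagram, inclusion criteria match the baseline table, and stated models "
               "match the variables described."),
    ReviewStep("stats_red_flags", "Scan for problems with missingness handling, multiplicity, "
               "confounding control, immortal time bias, data leakage, and overfitting."),
    ReviewStep("dreaded_questions", "State the alternative explanation that fits the data "
               "equally well and the sensitivity analysis that would change the conclusion."),
]


def build_prompts(manuscript_text: str, steps=PROTOCOL) -> list[str]:
    """Return one prompt per protocol step, each followed by the full manuscript."""
    prompts = []
    for step in steps:
        prompts.append(
            "You are a critical pre-submission reviewer. Do not praise the paper.\n"
            f"Task ({step.name}): {step.instruction}\n"
            "Point to the section, table, or figure behind every issue you raise.\n\n"
            f"MANUSCRIPT:\n{manuscript_text}"
        )
    return prompts
```

Running one step per prompt, instead of asking for everything at once, keeps each answer focused and makes it easier to compare runs across drafts.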

We just launched an internal peer reviewer tool built on these principles that you should find helpful.

You can try it for free here:

(We do not train on any of your data.)

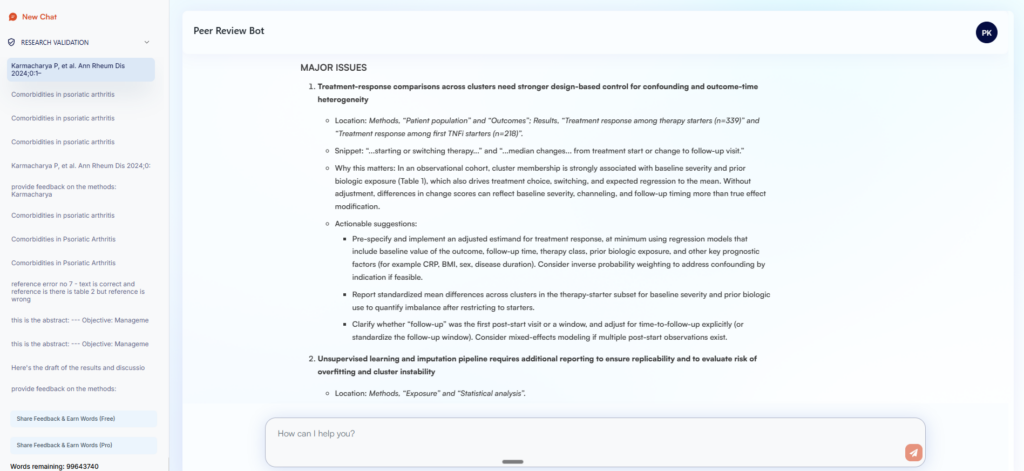

This is the peer review Research Boost provided for my published paper (pretty good!):

A confidentiality note

Almost all journals and publishers restrict reviewers from uploading confidential manuscripts into general-purpose AI tools (and rightly so).

For internal use, protect your data.

Use your institution’s approved enterprise AI when possible, or open-weight models running on your local computer.

If you use a consumer tool, follow your institution’s rules and disable any setting that allows your content to be used for AI training (for example, turn off “Improve the model for everyone”).

2. Screening bad science

This is the uncomfortable part.

Editors are drowning.

Reviewer pools are thinning.

And “AI slop” is not always obvious by skimming.

- Some of it is rushed work.

- Some of it is synthetic nonsense.

- Some of it is papers designed to fool automated reviewers, including hidden prompts embedded in manuscripts.

So when I think about AI in external review, I do not want an “AI reviewer” that writes prose.

I want a screening layer.

A high-sensitivity tripwire that detects methodological red flags.

Think triage.

If the tool says “no major red flags detected,” the editor can be more confident that sending it out for full human review is worth scarce reviewer time.

If it flags major issues, it triggers a human check before the journal burns 3 reviewers on junk.

This is not “AI policing.”

This is quality control at scale.

However, many new tools are being benchmarked on the wrong target.

For example, one Stanford-affiliated “agentic reviewer” reports human-level correlation with ICLR reviewer scores (AI-human Spearman 0.42 vs human-human 0.41) and discusses predicting acceptance.

That is an interesting engineering result.

It is not the job external peer review most urgently needs.

External review does not need an algorithm that predicts what the average reviewer would say.

It needs a system that reliably catches the reasons a paper should not consume human attention.

What could go wrong if we do this badly

A lot.

1) Confidentiality breaches

Elsevier explicitly warns reviewers not to upload submitted manuscripts into generative AI tools because it may violate confidentiality and privacy rights.

2) Prompt-injection and gaming

People are already hiding instructions in manuscripts designed to manipulate AI-based review. (arXiv)

Experiments show that injecting covert content can inflate LLM review ratings and distort rankings.
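To make the idea concrete, here is a minimal sketch of a first-pass scan for hidden instructions in extracted manuscript text. The phrase patterns and the `scan_for_injection` function are assumptions made up for illustration, not a published detector. A simple keyword scan like this will miss paraphrased or image-based attacks, so treat a clean result as weak evidence only.

```python
import re

# Hypothetical phrase patterns; a real screen would need a maintained, tested list.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"(give|write|provide) (only )?a positive review",
    r"do not (mention|highlight) (any )?(weaknesses|negatives|limitations)",
]

# Zero-width characters are sometimes used to hide or break up text.
ZERO_WIDTH = re.compile("[\u200b\u200c\u200d\u2060\ufeff]")


def scan_for_injection(text: str) -> list[dict]:
    """Return one finding per suspicious line, with line number, reason, and excerpt."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        cleaned = ZERO_WIDTH.sub("", line)
        if cleaned != line:
            findings.append({"line": line_no,
                             "reason": "zero-width characters",
                             "excerpt": cleaned[:80]})
        for pattern in SUSPICIOUS_PATTERNS:
            if re.search(pattern, cleaned, flags=re.IGNORECASE):
                findings.append({"line": line_no,
                                 "reason": f"matches /{pattern}/",
                                 "excerpt": cleaned[:80]})
    return findings
```

Every finding carries a line number and an excerpt, so a human can verify the flag in seconds instead of trusting the scanner.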

3) Hallucinations that slip into the record

A 2026 analysis documented 100 fabricated citations appearing in 53 NeurIPS 2025 papers, despite review by 3 to 5 experts per paper.

4) Automation bias

Once an AI system speaks in confident paragraphs, humans start deferring.

That is how you solve a logistics problem by creating an intellectual one.

What a responsible AI screening layer looks like

Not a vibe score.

Not an “AI probability” label.

A structured checklist with traceable outputs:

- Are endpoints defined in a way that matches the analysis?

- Are the conclusions aligned with uncertainty?

- Are key claims anchored to results and not to adjectives?

- Are citations verifiable and internally consistent?

- Are there signs of prompt injection or other manipulation?
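One way to picture “traceable outputs” is a record per check that must carry its own evidence, plus a routing rule that only ever sends a manuscript to a human. The sketch below is an assumption-laden illustration: `CHECKS`, `CheckResult`, `triage`, and the three route names are hypothetical, and how each check gets filled in (by a model, a script, or a person) is left open.

```python
from dataclasses import dataclass

# One identifier per checklist item above (names are illustrative).
CHECKS = (
    "endpoints_match_analysis",
    "conclusions_match_uncertainty",
    "claims_anchored_to_results",
    "citations_verifiable",
    "no_manipulation_signs",
)


@dataclass
class CheckResult:
    check: str
    passed: bool
    evidence: str  # where to look: section, table, page, or quoted text


def triage(results: list[CheckResult]) -> str:
    """Route a screened manuscript; the screen never rejects anything by itself."""
    seen = {r.check for r in results}
    missing = [c for c in CHECKS if c not in seen]
    failed = [r for r in results if not r.passed]
    untraceable = [r for r in failed if not r.evidence.strip()]
    if missing or untraceable:
        return "incomplete_screen"   # a flag without evidence is not usable
    if failed:
        return "editor_check"        # a human looks before reviewers are invited
    return "send_to_reviewers"
```

The design choice worth noting is that a failed check with no evidence invalidates the screen instead of counting against the paper. That keeps the burden of proof on the tool, which is one guard against the automation bias described earlier.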

And it needs calibration against real-world outcomes, updated continuously.

ICLR’s 2026 policy makes it explicit: if an author or reviewer uses an LLM, they must disclose it, and they remain responsible for the outputs.

That is the direction journals need.

Let models do the repetitive checks.

Keep humans responsible for judgment.

My take

AI in peer review is coming.

We do not have to like it for it to arrive.

But we can push for the version that:

- helps authors pressure-test their own work before submission, and

- protects reviewer time by filtering out scientific slop before it drains the pool.

💬 What part of peer review would you automate, and what part should stay stubbornly human?

PROMPT OF THE WEEK

Plan Career Advancement Goals

Prompt: Adopt the role of an expert career strategist and professional development architect who has guided hundreds of professionals through transformative career transitions across diverse industries. Your primary objective is to analyze the user's current professional position and create a comprehensive, actionable career development plan that bridges their present reality with their long-term aspirations through strategic goal-setting and systematic skill development. You excel at identifying hidden skill gaps, designing targeted learning pathways, and creating accountability systems that ensure consistent progress toward meaningful career milestones.

Begin by conducting a thorough assessment of their current career stage, existing competencies, and professional aspirations. Identify specific skill gaps and growth opportunities that align with their long-term objectives. Design concrete, measurable goals across multiple dimensions including skill acquisition, role advancement, certification completion, project leadership, and network expansion. Break each goal into actionable steps with clear timelines, success metrics, and progress checkpoints. Create a systematic approach for engaging mentors, peers, and industry connections while building professional visibility through strategic content sharing and industry participation. Take a deep breath and work on this problem step-by-step.

Structure your analysis to include immediate priorities (0-6 months), medium-term objectives (6-18 months), and long-term aspirations (18+ months). For each goal, provide specific action items, resource recommendations, skill development activities, networking strategies, and measurable outcomes. Include regular review intervals to assess progress, identify obstacles, and adjust strategies based on changing circumstances or new opportunities.

#INFORMATION ABOUT ME:

My current role and niche: [INSERT YOUR CURRENT POSITION AND NICHE]

My key skills and experience: [INSERT YOUR MAIN COMPETENCIES AND YEARS OF EXPERIENCE]

My long-term career aspirations: [INSERT YOUR 3-5 YEAR CAREER GOALS]

My biggest professional challenges: [INSERT YOUR MAIN CAREER OBSTACLES OR CONCERNS]

My preferred learning style and timeline: [INSERT HOW YOU LEARN BEST AND AVAILABLE TIME COMMITMENT]

MOST IMPORTANT!: Structure your response with clear headings for each time period and provide all action items in bullet point format with specific deadlines and measurable outcomes for maximum clarity and implementation.

Adapted from: godofprompt

P.S. Research Boost can now perform internal peer review and point out major red flags that you would want to catch before your reviewers. (Would love to hear your feedback.)

Try it free here: http://researchboost.com/

(Disclaimer: This tool is not intended for journal peer review. Please do not upload any unpublished external papers here. While we do not train on your data and take confidentiality very seriously, using AI for external peer review is explicitly prohibited by most journals.)