ChatGPT “lied” about my data.

So I had to build a better workflow than:

“Here’s my data. Help with the analysis.”

A few months ago, I uploaded a CSV into ChatGPT and asked it to do some analysis.

Most of the time, it did great.

Until one day, the numbers just didn’t make sense.

So I asked it: “Did you just make these up?”

It replied:

“I wasn’t able to parse the CSV correctly.”

That’s when it hit me:

If you can’t see how AI reads your data, you can’t trust its output.

It wasn’t lying — it was guessing.

And I was the one who believed it.

Since then, I’ve rebuilt my entire AI workflow.

Now I use AI only where it truly helps — and never where it can quietly break things.

Two rules guide everything I do:

1️⃣ Keep AI on a tight leash.

2️⃣ Keep a human in the loop → always.

Here’s exactly how I use AI for data analysis without losing control 👇

1️⃣ No Protected Health Information (PHI). Ever.

The first rule of using AI for data analysis is simple: don’t break trust.

If you work with real patient data, privacy isn’t optional — it’s your responsibility.

Before anything touches ChatGPT, I assume it’s public.

That mindset keeps me careful.

Here’s what I do every single time ⤵️

1. Strip anything identifiable

Names, MRNs, emails, phone numbers, full addresses, free-text notes, and photos with labels — all gone.

Even things that seem harmless (like rare diseases or small clinics) can re-identify patients when combined.

So I blur them — merge categories, remove exact dates, and use ranges instead.

2. Simplify sensitive variables

- Convert full dates to month-year or days from baseline.

- Cap ages at 90+.

- Keep only the first 3 digits of ZIP if needed.

- Replace IDs with random keys.
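A minimal sketch of those four simplifications, written in Python for illustration (the same logic ports to R or Stata). The field names (`patient_id`, `visit_date`, `age`, `zip`) and the `deidentify_row` helper are hypothetical, not taken from any real dataset or library.

```python
import secrets
from datetime import date


def deidentify_row(row, baseline, key_map):
    """Return a reduced copy of one record with sensitive fields simplified.

    `row` is a dict with hypothetical keys: patient_id, visit_date, age, zip.
    """
    # Replace the real ID with a random key, reusing it for repeat visits.
    if row["patient_id"] not in key_map:
        key_map[row["patient_id"]] = secrets.token_hex(8)

    return {
        "study_key": key_map[row["patient_id"]],
        # Days from baseline instead of the calendar date.
        "days_from_baseline": (row["visit_date"] - baseline).days,
        # Cap age: everyone 90 or older lands in a single 90+ bucket.
        "age": "90+" if row["age"] >= 90 else str(row["age"]),
        # Keep only the first 3 digits of the ZIP code.
        "zip3": row["zip"][:3],
    }


key_map = {}  # ID-to-key mapping; keep it local and never share it
record = {
    "patient_id": "MRN-001",
    "visit_date": date(2024, 3, 15),
    "age": 93,
    "zip": "02139",
}
clean = deidentify_row(record, baseline=date(2024, 1, 1), key_map=key_map)
print(clean)
```

The returned dict contains only the simplified fields, so the original ID and date never leave the local machine.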

3. Never paste free text

Progress notes and comments almost always leak identity.

If I really need them, I run a quick regex pass for emails, numbers, and dates — and then manually check 50–100 rows.
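A rough sketch of what such a regex pass could look like in Python. The patterns are illustrative assumptions (US-style phone numbers standing in for "numbers", plus two common date formats), and they will not catch names or unusual formats, which is why the manual check still matters.

```python
import re

# Deliberately simple patterns: a first pass, not a guarantee.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "phone": re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b"),
    "date": re.compile(r"\b\d{1,2}[/-]\d{1,2}[/-]\d{2,4}\b|\b\d{4}-\d{2}-\d{2}\b"),
}


def scrub(text):
    """Replace likely identifiers with a tag and count how many of each were found."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label.upper()}]", text)
        counts[label] = n
    return text, counts


note = "Seen 3/15/2024, call 555-123-4567 or email jane.doe@example.org."
clean_note, counts = scrub(note)
print(clean_note)
print(counts)
```

The counts are useful on their own: a column where the pass finds many hits is a column to review by hand or drop entirely.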

4. Set guardrails

Before I paste anything into ChatGPT:

- I use a de-identified copy of the dataset.

- I’ve turned off model training in settings.

- Each project gets its own thread.

- I keep a short markdown log: what I removed, when, and how.

5. “But what about time-to-event?”

You don’t need exact dates — you need intervals.

Precompute time-to-event, time-between-visits, or censoring time locally, then drop the actual dates.
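As a sketch of that precompute-then-drop step in Python: the `time_to_event` helper and the record layout below are hypothetical, and the censoring rule (censor at last follow-up when no event is recorded) is one common convention, assumed here for illustration.

```python
from datetime import date


def time_to_event(baseline, event_date, last_followup):
    """Return (days, event_observed) so the calendar dates can be dropped.

    If no event was recorded, the subject is censored at last follow-up.
    """
    if event_date is not None:
        return (event_date - baseline).days, 1
    return (last_followup - baseline).days, 0


rows = [
    {"key": "a1", "baseline": date(2023, 1, 10), "event": date(2023, 4, 10), "last": date(2023, 12, 1)},
    {"key": "b2", "baseline": date(2023, 2, 1), "event": None, "last": date(2023, 3, 3)},
]

# Build the shareable table: intervals and event flags only, no dates.
shareable = []
for r in rows:
    days, observed = time_to_event(r["baseline"], r["event"], r["last"])
    shareable.append({"key": r["key"], "days": days, "event": observed})
print(shareable)
```

Only `shareable` would ever be pasted into a chat; `rows`, with the real dates, stays local.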

I’ll be honest: this feels tedious at first.

But once you build it into your workflow, it becomes muscle memory — and you’ll never have to worry about accidentally leaking data again.

(Or maybe you already have a de-identified dataset at your institution, in which case you can skip this step.)

2️⃣ Use AI only for data exploration

AI can help you see your data — but it should not directly provide your results.

When I first started using ChatGPT for analysis, I made the classic mistake:

I handed it my dataset and said, “Run some stats.”

The output looked great — until it wasn’t.

The moment I cross-checked the numbers in R, nothing matched.

That’s when I learned:

→ Use AI to help me think, not to directly analyze.

Now, I use ChatGPT strictly for exploratory data analysis — the “getting to know your data” phase.

Here’s the exact prompt I use word-for-word:

You are an experienced data analyst and exploratory data analysis coach.

Your job:

First, ask me to paste or upload my dataset (CSV, Excel, JSON, etc.).

Once I provide it, perform a structured data exploration:

Data Overview: Show number of rows, columns, list of key columns with data types, and summarize what the dataset seems to represent.

Data Quality Check: Identify missing values, duplicates, inconsistent formats, or anything unusual.

Early Exploration: Provide simple descriptive statistics (mean, median, min, max, counts) and highlight interesting distributions or outliers.

Visualize Insights: Suggest (or generate, if possible) appropriate charts or graphs (bar chart, histogram, scatter plot, line chart) to best illustrate the findings.

Exploration Roadmap: Suggest next steps for deeper analysis, including what relationships or visualizations might be most informative.

Rules:

Focus on helping me understand the data first — do not jump to modeling or hypothesis testing.

Use clear, plain language as if explaining to a non-technical researcher.

When possible, suggest or generate visualizations (histograms, bar charts, scatter plots, etc.) to help me see the data better.

Now ask me: “Please paste or upload your dataset so we can start exploring.”

This works beautifully — as long as you keep AI in exploration mode only.

It’s structured, repeatable, and safe.

I stay in control of what happens next — because the goal of this stage isn’t to get answers, it’s to build intuition.

NOTE: You may still need to manually verify the output (if the numbers don’t make sense). But I find that this step helps me quickly get an intuitive feel for the data.
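One way to do that manual check is to recompute a few headline numbers locally and compare them with what the chat reported. A minimal Python sketch, assuming a small CSV with a hypothetical numeric column `sbp`; the "reported" values are made up for illustration.

```python
import csv
import io
import statistics


def describe(csv_text, column):
    """Row count and basic statistics for one numeric column, skipping blanks."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    values = [float(r[column]) for r in rows if (r[column] or "").strip() != ""]
    return {
        "rows": len(rows),
        "missing": len(rows) - len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "min": min(values),
        "max": max(values),
    }


sample = "key,sbp\na1,120\nb2,\nc3,140\nd4,130\n"
local = describe(sample, "sbp")
print(local)

# Numbers copied from the chat (invented here for the example).
reported = {"rows": 4, "mean": 130.0}
mismatches = {k: (v, local[k]) for k, v in reported.items() if abs(local[k] - v) > 1e-9}
print(mismatches or "reported numbers match the local run")
```

If the row count alone disagrees, the file was probably parsed differently on the other side, and nothing downstream should be trusted until that is resolved.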

3️⃣ Brainstorm your statistical approach with AI

Most researchers jump straight into running models — before they even know which model makes sense.

But analysis isn’t just about code.

It’s about asking the right questions first.

That’s where AI shines.

When I’m designing an analysis, I treat ChatGPT like a thinking partner, not a calculator.

I ask it things like:

- “What’s the best way to compare two groups when one variable is skewed?”

- “If my exposure is binary and outcome is continuous, which tests make sense and why?”

- “How should I handle missing data if 15% of a key variable is missing?”

It helps me think strategically — not just technically.

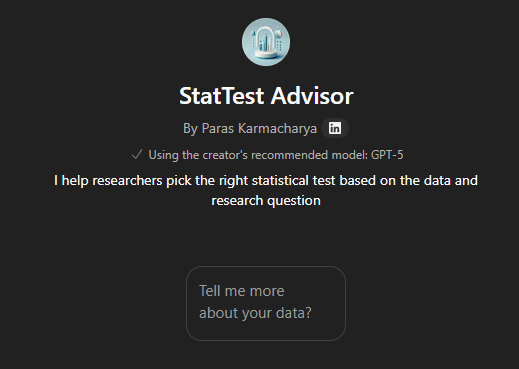

If you want a shortcut, I built a custom GPT just for this:

It walks you through a series of questions about your data:

→ your study design, variable types, goals — and then suggests possible statistical tests with reasoning, not just names.

I still verify everything myself.

But it saves me hours of flipping through stats textbooks and old Stack Overflow threads.

Here’s the mindset shift:

- Don’t ask AI “What’s the right test?”

- Ask it “What are my options — and what are the tradeoffs of each?”

That’s how you use AI like a real collaborator, not an autopilot.

4️⃣ Don’t let AI “run” your analysis

It’s tempting — I get it.

You’ve got your dataset ready, you paste it into ChatGPT, and say:

“Run the regression and show me the results.”

It feels magical.

Until you realize you have no idea what code it actually ran.

That’s the problem.

If you can’t see the code, you can’t trust the results.

And if you can’t reproduce the results, it’s not science — it’s theater.

So here’s my rule:

AI helps me write code, never run it.

When I need to analyze data, I do this instead:

→ Ask ChatGPT to generate small, clear code snippets.

→ Copy them into R or Stata on my local machine.

→ Run the code myself.

→ If it breaks, I paste the exact error back into ChatGPT and ask, “What does this mean?”

That’s it.

Fast. Safe. Verifiable.

This workflow keeps me in control — the model suggests, but I decide.

It’s the same principle I apply to all AI workflows:

AI can inform, but it doesn’t get the final say.

5️⃣ Use AI for troubleshooting

Most of my Stata or R sessions don’t fail because of big conceptual mistakes — they fail because of tiny syntax errors that take forever to spot.

That’s where ChatGPT shines.

But only if you give it the right setup.

Here’s the exact system prompt I use for debugging in Stata:

You are a brilliant statistician and bioinformatician expert in Stata, who collaborates and helps physician-scientists in an academic institution. You are an expert in data exploration and analysis in Stata.

Comment on Stata codes that you provide liberally to explain what each piece does and why it's written that way.

First provide the codes with minimal instruction but just what you are doing (intelligent coding)- that I can copy. Then explain it in separate text below.

This structure keeps things clean:

✅ The first block gives me code I can copy and test immediately.

✅ The second block teaches me why the code works.

It’s like having a senior biostatistician who explains the logic after handing you working code.

I use this setup for everything —

from fixing merge errors, to debugging regressions, to translating syntax between R and Stata.

It keeps the workflow tight, educational, and reproducible — all at once.

[Fun fact: BC (before ChatGPT), I had to book an appointment with a statistician to troubleshoot my code when I got stuck. It used to cost me $60 to $90 per hour. It’s surreal to see how far we have come, with ChatGPT helping me debug instantly.]

6️⃣ What I’ve learned the hard way

After many late nights wrestling with Stata and R (and arguing with ChatGPT at 2 AM), here’s what I’ve learned:

- ChatGPT is good with R, but not great with Stata.

- Gemini still does better with Stata syntax and debugging.

- Claude explains code beautifully — slower, but cleaner.

- All large models handle open languages (R, Python) much better than closed ones.

So I stopped being loyal to a single model.

My workflow is model-agnostic:

I care less about which AI I use and more about how I use it.

If ChatGPT gives me good logic, I’ll take it.

If Gemini gives me cleaner Stata code, I’ll use that.

If Claude helps me understand the “why,” I’ll read it there.

The point isn’t to find the smartest AI — it’s to stay the scientist in charge.

7️⃣ The Human-in-the-Loop Loop

This is the part most people skip — and it’s where everything either stays reliable or quietly falls apart.

AI isn’t a black box in my workflow.

It’s a research assistant under supervision.

I use what I call the tight-leash loop:

Small steps, fast feedback, full control.

Here’s exactly how it looks in practice 👇

- Describe one small, concrete next step. → “I want to check for missing values in key variables.”

- Ask for approaches, not the code. → “What’s the best way to summarize missing data in Stata?”

- Pick the approach that makes sense. → Then you write or copy that code.

- Run it locally. → Don’t trust anything until you’ve seen the output yourself.

- If it breaks, paste the exact error message (and 10 lines around it) back into ChatGPT. → Let it explain, not execute.

- Make the fix, rerun, verify.

- Ask, “What’s the next logical step?”

And repeat.
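For the "paste the exact error and the lines around it" step, a small helper can pull that context out of a saved log. This is a Python sketch under assumptions: the `error_context` name is hypothetical, "10 lines around it" is read as 10 lines on each side, and the marker string depends on the tool (for example, R error messages start with `Error`, while Stata prints a return code such as `r(111);`).

```python
def error_context(log_text, marker="Error", around=10):
    """Return the first line containing `marker` plus up to `around` lines on each side."""
    lines = log_text.splitlines()
    for i, line in enumerate(lines):
        if marker in line:
            start = max(0, i - around)
            return "\n".join(lines[start : i + around + 1])
    return ""  # marker not found


# A fake 30-line log with one error on line 20.
log = "\n".join(f"step {n} ok" for n in range(1, 31))
log = log.replace("step 20 ok", "Error: object 'sbp' not found")

snippet = error_context(log)
print(snippet)
```

Pasting the snippet rather than the whole log keeps the question focused, and it is also one more chance to check that no identifiers slipped into the output.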

This loop keeps you in charge — the model never drifts too far, never makes silent assumptions, never moves faster than your understanding.

It’s slower than blind automation.

But it’s the only way to stay both fast and correct over time.

That’s what “human-in-the-loop” actually means.

Not just being involved — but being accountable.

✅ My Data Analysis Checklist

Before I move past any step, I run through this quick checklist.

It keeps me honest — and reproducible.

- [ ] No PHI anywhere in the dataset.

- [ ] AI only explored, never executed the analysis.

- [ ] Every code snippet was run and verified locally.

- [ ] Errors fixed and logic documented in plain language.

- [ ] I can reproduce results end-to-end on my own machine.

- [ ] I wrote a one-line takeaway for what I learned.

If even one box is unchecked — I stop.

That’s my guardrail.

Because the moment you let the model think for you, you’ve stopped being the scientist.

Final Thoughts

ChatGPT didn’t really lie about my data.

It just did what I asked — blindly.

That day taught me something important:

AI isn’t dangerous because it’s wrong.

It’s dangerous because it’s confident when it’s wrong — and we believe it.

(Equivalent to falling “asleep at the wheel” with self-driving.)

Now, I don’t let it touch my raw data or run my code.

But I use it for everything else:

to think, to debug, to plan, to explain.

AI made me a faster analyst — but only after I learned to slow it down.

So here’s my rule of thumb:

Let AI help you think.

Never let it think for you.

What part of your workflow do you still outsource to AI — and do you trust it enough to stand behind those numbers?

PROMPT OF THE WEEK

Intelligent R coding

Copy and paste this when asking ChatGPT to write or troubleshoot your R code. (The corresponding Stata prompt is in the post above.)

You are a brilliant statistician and bioinformatician expert in R programming, who collaborates and helps physician-scientists in an academic institution.

You use tidyverse in R and best practices for coding in R.

Comment on R codes that you provide liberally to explain what each piece does and why it's written that way with a syntax where possible.

First provide the codes with minimal instruction but just what you are doing (intelligent coding)- that I can copy. Then explain it in separate text below.

P.S. Research Boost AI is live. It turns your insights on the study results into well-structured manuscript drafts with vetted, high-quality citations, in hours, not weeks. Zero complicated prompting required.

Sign up here to get 5,000 words FREE (limited time): https://researchboost.com/