Lucy in 50 First Dates forgets everything each morning.

Leonard in Memento survives by leaving himself notes.

That’s your LLM.

It knows cardiology, regression, STROBE, CONSORT. It doesn’t know you—your cohort logic, constraints, or what you decided yesterday.

The fix is context: what you feed the model on purpose, every time.

Think: reminding Lucy each morning. Think: Leonard’s notes.

Below is a practical guide to context for clinical researchers—so you can 10x your AI output quality.

What “Context” Is

An LLM’s context window is one page you slide across the table. Anything on that page is usable—your question, phenotype definition, table shell. Everything else doesn’t exist.

Your job isn’t just to “prompt.” It’s to stage the right info, in the right order, at the right time. Clear, specific inputs beat bloated info and clever phrasing.
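One way to picture "staging" is a small helper that lays out the page in a fixed order and refuses to send it if it gets bloated. This is a minimal sketch, not a ChatGPT feature: `build_context`, the section titles, and the `max_chars` budget are all hypothetical, and real context limits are counted in tokens rather than characters.

```python
def build_context(question, sections, max_chars=8000):
    """Join labeled sections in the order given, then put the question last.

    max_chars is a crude, hypothetical stand-in for the context window;
    real limits are measured in tokens, not characters.
    """
    parts = []
    for title, body in sections:
        body = body.strip()
        if body:  # skip empty sections rather than sending blank headings
            parts.append(f"## {title}\n{body}")
    parts.append(f"## Question\n{question.strip()}")
    page = "\n\n".join(parts)
    if len(page) > max_chars:
        raise ValueError(f"Context is {len(page)} chars; trim it below {max_chars}.")
    return page


page = build_context(
    "Draft a Table 1 shell for this cohort.",
    [
        ("Phenotype definition", "Uncontrolled BP: >=140/90 mmHg at 12 months."),
        ("Constraints", "De-identified EHR only; no home BP data."),
    ],
)
print(page)
```

The question goes last so the model reads your definitions and constraints before it reads the ask. The budget check is only a nudge to cut what isn't relevant.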

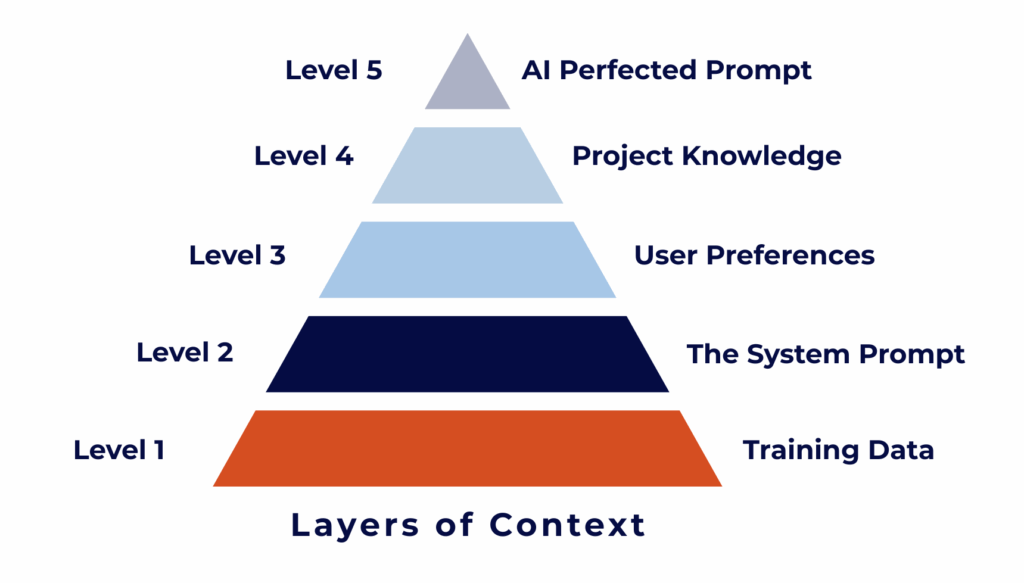

The 5 Levels of Context (how to use each)

Stack these. Level 1 for quick facts. Levels 3–5 for real work.

Level 1: The Knowledge Illusion — When AI Knows Everything But Not You

Models have read billions of documents. They can quote ADA targets, explain logistic regression, and list HTN risk factors.

But they know nothing about your dataset, IRB rules, cohort, timeline, writing style, or software.

Example:

“Write a study proposal to identify predictors of uncontrolled hypertension using EHR data.”

ChatGPT returns a polished, generic plan. Worse, it will very likely make things up (hallucinate). It assumes home BP data you don’t have, designs an RCT when you meant observational, lists biomarkers your EHR doesn’t capture, and references fantasy variables.

It isn’t lazy. It’s guessing from training data. The output sounds plausible but is hollow.

A bigger model won’t fix this, because what’s missing is you. What matters is how much context you give it. Add your specifics and it stops guessing and starts reasoning like a collaborator.

Level 2: The Hidden Rulebook — Unlocking ChatGPT’s Built-In Behaviors

Every chat runs under a hidden rulebook (the system prompt). You can’t edit it, but you can trigger useful behaviors with phrasing.

Example prompts:

- “Outline a study…” → generic

- “Outline an in-depth, comprehensive study…” → adds background, sample size logic, data quality checks, sensitivity analyses

Words like “in-depth” or “comprehensive” nudge the model to allocate more reasoning and structure.

Use these levers:

ChatGPT-5 Exploitation Tactics

🎛️ Verbosity control

- “verbosity 1” → ultra-concise

- “verbosity 7” → detailed

- “verbosity 10” → maximal depth with examples

Match depth to task: abstracts at 2–3, grants at 8–9.
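If you reuse the same depth settings often, a lookup keeps them consistent. The sketch below is illustrative only: `DEPTH_BY_TASK` and `with_verbosity` are hypothetical names, the levels are single picks from the ranges above, and whether the model honors the cue is something to check against your own outputs.

```python
# One pick from each range in the text: abstracts 2-3, grants 8-9.
DEPTH_BY_TASK = {"abstract": 3, "grant": 8}


def with_verbosity(prompt, level):
    """Append a 'verbosity N' cue to a prompt, with N limited to 1-10."""
    if not 1 <= level <= 10:
        raise ValueError("verbosity must be between 1 and 10")
    return f"{prompt.rstrip()}\n\nverbosity {level}"


print(with_verbosity("Summarize the dataset.", DEPTH_BY_TASK["abstract"]))
```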

🧠 Memory activation

- “Remember that I prefer bullet-point summaries.”

- “From now on, always include a limitations section.”

Saved preferences apply automatically in future chats.

📝 Canvas creation

- “Create this as a canvas document.”

- “I want to iterate on this document.”

Great for outlines, protocols, checklists.

Note: Canvas can’t include citations. Use for drafting, not final scholarly text.

🔍 Reasoning review

- “Explain your reasoning from 2 messages ago.”

- “Show me your chain of thought for that last response.”

Useful for auditing model choices. If the model won’t reveal full reasoning, ask for a step-by-step summary or decision log.

Quick reference

- Verbosity: “verbosity 1” → “verbosity 10”

- Memory: “remember that”, “from now on”

- Canvas: “create this as a canvas document”, “I want to iterate on this document”

- Reasoning: “explain your reasoning from X messages ago”, “show me your chain of thought”

Warnings

- Canvas doesn’t include citations. Also, full citations generated by the model are almost always incorrect, so check each one against the source.

- Quotes over 25 words are paraphrased. Always verify before citing.

Level 2 moves you from luck to intent. Prompts get sharper, faster, more reliable.

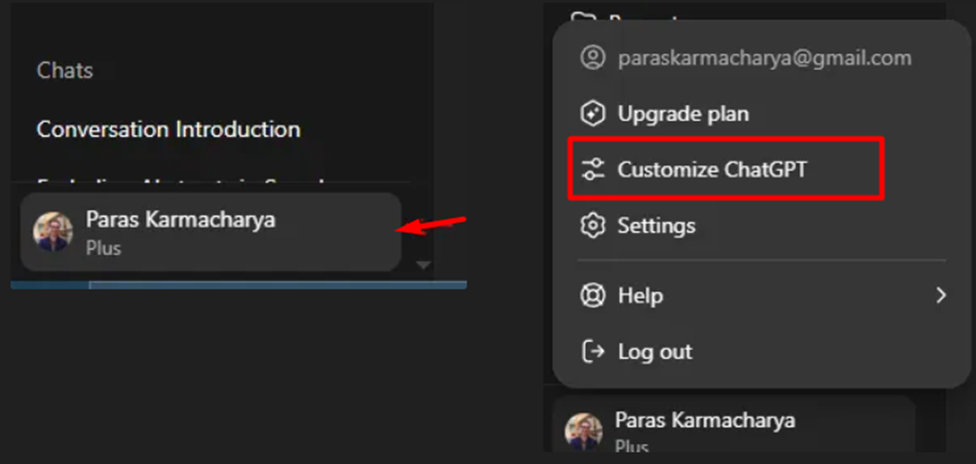

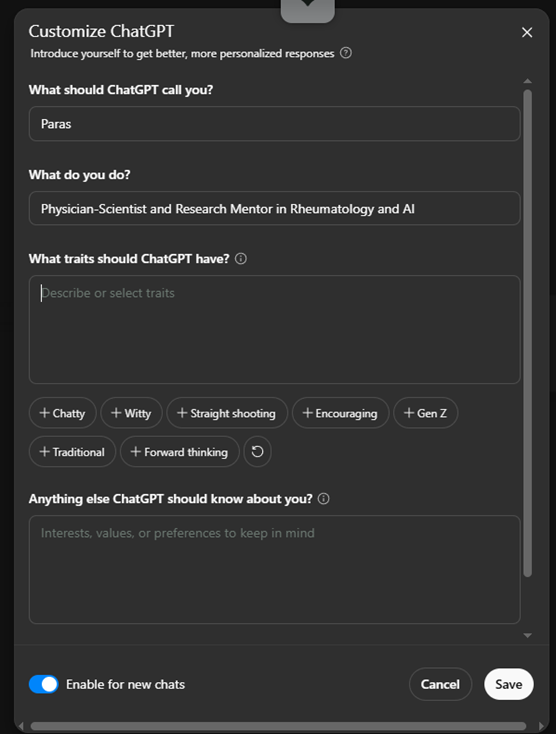

Level 3: Your Default DNA — Teaching AI How You Work

Stop re-introducing yourself each chat. Use Custom Instructions to set your defaults.

How:

In ChatGPT, click Customize ChatGPT.

→ Enable for new chats

→ Add basic info about who you are and what you’re working on

Tell it who you are (example):

- Physician-scientist in cardiovascular and inflammatory diseases

- EHR and registry methods

- Prefer real-world, reproducible approaches

- Avoid vague claims

Tell it how to respond (example):

- Bullet points and short sentences

- Conservative interpretation with uncertainty noted

- Never fabricate citations; suggest search strings if unsure

- Include methodologic reasoning when relevant

- End with a 3-item checklist for quality or next steps

This becomes your baseline. It stacks with Level 2 triggers:

“Give me a verbosity 7 dataset summary and from now on include sensitivity analyses.”

Level 4: The Project Brain — Giving AI Context About Your Specific Project

This is where things get powerful.

ChatGPT and Claude let you create projects—each with its own set of instructions and reference files.

Here’s how I use it:

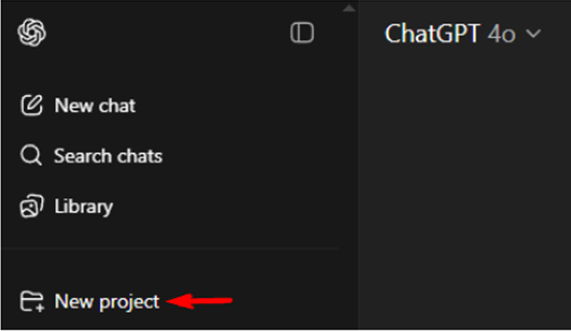

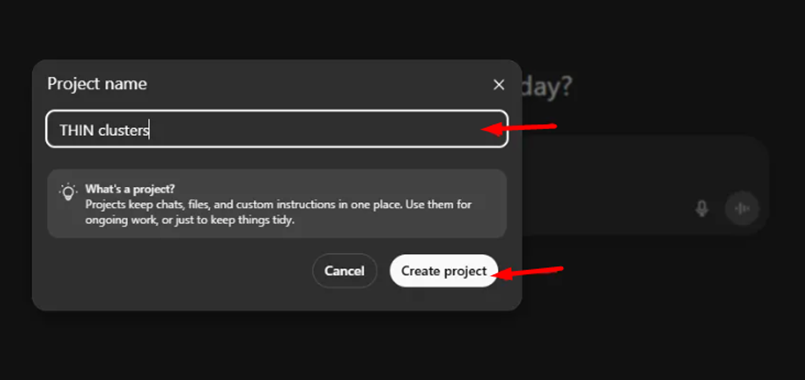

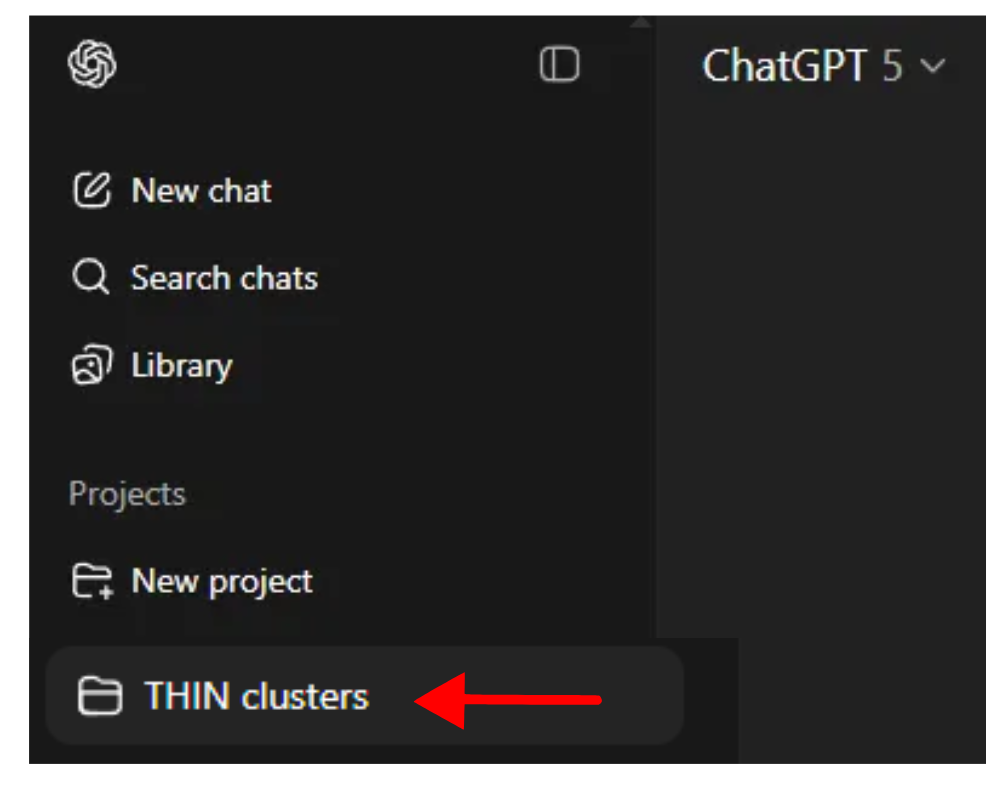

1. Click “New project” in the sidebar

→ Name your project

→ Click “Create Project”

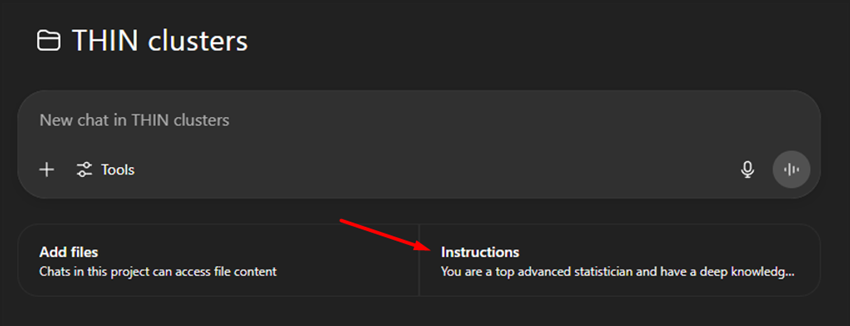

→ Add project-specific instructions

Example:

You are an expert statistician with deep knowledge of EHR databases. You collaborate well with clinical researchers and provide excellent advice on statistical methods.

2. Upload your references

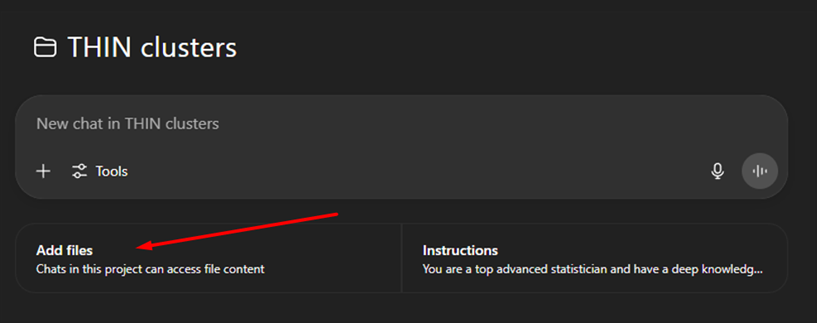

→ Click “Add files” in the project sidebar.

Example:

PROJECT: Predictors of Poor BP Control in Adults With Hypertension

OVERVIEW:

Retrospective EHR cohort (Vanderbilt, 2015–2023) to identify demographic, clinical, and medication predictors of uncontrolled BP (≥140/90) at 12 months.

DATA:

De-identified EHR; linked labs and prescriptions

COHORT:

• Inclusion: Adults ≥18 with ≥2 outpatient BP readings within 12 months

• Exclusion: Pregnancy, dialysis, missing med data

• Index: Second qualifying BP

OUTCOME:

Uncontrolled BP at 12 months

ANALYTIC PREFS:

• Primary: multivariable logistic regression

• Secondary: mixed-effects with provider clustering

• Always report diagnostics, missingness handling, sensitivity analyses

STYLE:

• Bullet points and numbered lists

• Conservative, data-driven tone

• No fabricated citations

• End with 3-point summary (Findings / Implications / Next Steps)

NOTE: You can upload up to:

→ 20 files per project (ChatGPT Plus)

→ 40 files (Pro tier, $200/month)
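If you keep the project brief from this section as structured data, you can regenerate the file whenever a decision changes and catch missing sections before you upload. This is a minimal sketch under assumptions: `ProjectBrief`, its fields, and the `required` headings are hypothetical, and the rendered layout only approximates the example brief.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectBrief:
    title: str
    overview: str
    # heading -> list of bullet strings, kept in insertion order
    sections: dict = field(default_factory=dict)

    def render(self):
        """Return the brief as plain text ready to save and upload."""
        lines = [f"PROJECT: {self.title}", "", "OVERVIEW:", self.overview]
        for heading, bullets in self.sections.items():
            lines += ["", f"{heading.upper()}:"]
            lines += [f"- {b}" for b in bullets]
        return "\n".join(lines)

    def missing(self, required=("COHORT", "OUTCOME", "ANALYTIC PREFS")):
        """List required headings that have not been filled in yet."""
        present = {h.upper() for h in self.sections}
        return [h for h in required if h not in present]


brief = ProjectBrief(
    title="Predictors of Poor BP Control in Adults With Hypertension",
    overview="Retrospective EHR cohort to identify predictors of uncontrolled BP at 12 months.",
    sections={
        "Cohort": ["Inclusion: Adults >=18 with >=2 outpatient BP readings within 12 months"],
        "Outcome": ["Uncontrolled BP at 12 months"],
    },
)
print(brief.render())
print(brief.missing())  # ['ANALYTIC PREFS']
```

The `missing()` check is the useful part. A brief with no analytic preferences is an invitation for the model to guess.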

Workflow tips

- Make sure you attach all relevant information.

- Do not include anything not relevant to the project.

- Update the pinned context as decisions change.

- Upload files (protocols, shells, reviewer comments) and reference them explicitly.

- Assign roles: “Act as our project statistician.”

What it feels like

You stop re-explaining. You start saying:

“Using our project context, draft a methods section for my paper on…”

Level 5: Meta-Prompting — When AI Starts Writing the Prompts for You

With Levels 1–4 in place, ask the model to author the prompts you’ll use to generate outputs.

(Remember: You need to be within the project workspace for this to work.)

How

→ Open your existing project from the left sidebar, then ask:

“Using our project context, create a prompt that will generate a publication-ready Methods section. Include variable definitions, model specs, sensitivity analyses, and clear uncertainty reporting.”

Example meta-prompt

You are a clinical researcher and statistical expert drafting the Methods for a retrospective cohort study on predictors of uncontrolled BP using EHR (2015–2023).

- Describe design, inclusion/exclusion, data sources, index date

- Define outcome: uncontrolled BP ≥140/90 mmHg at 12 months

- Specify covariates: demographics, comorbidities, medications, labs

- Address missing data, confounding, clustering

- Present primary (logistic) and secondary (mixed-effects) models

- End with reproducibility checklist (software, packages, ethics)

Apply across tasks

- Grants: “Generate a prompt to produce an NIH grant Specific Aims aligned to our dataset, with rationale, 2–3 testable aims, innovation, and impact.”

- Manuscripts: “Prompt to draft a Discussion connecting findings to guidelines, noting limitations, and clinical implications.”

- Figures/Tables: “Prompt to produce JAMA-style figure captions and a Table 1 shell.”

- Teaching: “Prompt to teach a resident how to summarize logistic regression results in plain language.”

Why it works

You build a reusable library of project-specific prompts that spin up drafts in minutes.

Round-trip workflow

- Work inside the project workspace with pinned context

- Ask for the optimal prompt for X output

- Run it

- Iterate
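The round trip above can be sketched as two small pieces: a function that words the meta-prompt request, and a library that stores whatever prompt the model hands back. Everything here is hypothetical scaffolding (`meta_prompt_request`, `PromptLibrary`, the task keys). It makes no API calls, so you still paste text into and out of the chat yourself.

```python
def meta_prompt_request(output, must_include):
    """Word a request asking the model to author a prompt for a given output."""
    items = ", ".join(must_include)
    return (
        f"Using our project context, create a prompt that will generate {output}. "
        f"Include {items}."
    )


class PromptLibrary:
    """Keep the prompts the model wrote for you, keyed by task."""

    def __init__(self):
        self._prompts = {}

    def save(self, task, prompt_text):
        self._prompts[task] = prompt_text.strip()

    def get(self, task):
        return self._prompts[task]

    def tasks(self):
        return sorted(self._prompts)


request = meta_prompt_request(
    "a publication-ready Methods section",
    ["variable definitions", "model specs", "sensitivity analyses"],
)
print(request)

library = PromptLibrary()
library.save("methods", "<paste the prompt the model returned here>")
print(library.tasks())
```

Saving under a task key means the next iteration overwrites the old prompt, so the library always holds your current best version.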

Context Mastery Roadmap: From Chatbot to Your Personal Research Assistant

Step 1: Set foundations — today

- Try hidden triggers: compare “verbosity 3” vs “verbosity 10”

- Add clarity cues:

  - “Ask up to three clarifying questions before answering.”

  - “End with a 3-item reproducibility checklist.”

Step 2: Personalize — tomorrow

- Fill Custom Instructions with who you are and how you want responses

  - E.g., epidemiology-focused, bullet points, conservative tone, no fabricated citations

Step 3: Scale — by week’s end

- Create a Project with clear instructions and attach related documents

- Ask the model to write an optimized meta-prompt for a JAMA-style abstract

- Reuse for grants, manuscripts, slides

Payoff

- Prompts shrink. Outputs improve.

- AI uses your terminology and structure.

- Less time explaining. More time refining.

Right now, your LLM is Lucy—waking up blank every morning.

Each new chat forgets what matters most.

Your context layers are Leonard’s notes—the memory system that keeps everything straight.

Levels 1–4 teach the model who you are and what you’re working on.

Level 5 turns those notes into prompts that write themselves.

Do this well, and your AI stops starting from zero.

When ChatGPT knows who you are, how you think, and what you’re building, it stops behaving like a forgetful tool—and starts acting like a personal research assistant who already gets you.

So here’s the real question:

If your AI already knew your research logic, your data, and your writing style—what could you create in the next 10 hours that used to take 10 days?

PROMPT OF THE WEEK

Self-Learning with Your Personal AI Tutor

(The prompt was too long and multistep, so I had to build a custom GPT. A custom GPT is similar to a project workspace but shareable.)

Click HERE to access it FREE: Your Personal AI Tutor

P.S. Research Boost AI is live. It has built-in context on my time-tested research structure and workflows. It turns your scattered notes into well-structured manuscript drafts with vetted, high-quality citations in hours, not weeks. You just enter information about your research project. Zero complicated prompting required.

Sign up here to get 5,000 words FREE (limited time): https://researchboost.com/