47 tabs. 300+ PubMed hits. A graveyard of PDFs I swore I’d read “later.”

That was my literature review workflow for years.

Grab 2-3 solid review papers. Sit down with a coffee. Read them cover to cover. Then follow the trail. Pull references. Open more papers. Chase more threads.

Repeat until your eyes blur or your confidence cracks, whichever comes first.

It worked. But only sort of.

I’d spend days chasing papers down rabbit holes, convinced I was being thorough, and still walk away with this nagging feeling: Am I missing the one study that matters?

Then AI entered the picture.

And it solved some problems. Fast. Papers appeared in seconds instead of hours.

Semantic search tools could understand messy, human-language questions and return relevant results without exact keyword matches.

But AI does not find the right papers consistently. And worse, it hallucinates.

Speed is useful. Precision is what gets your manuscript accepted.

I’d get results that looked impressive on the surface. Twenty papers in two minutes.

But when I sat down to actually read and evaluate them, half were tangential. Some were outdated. Others lacked the impact factor or rigor I needed for a citation in a peer-reviewed submission.

The Real Problem with Pure AI Literature Search

The issue is not that AI is bad at searching. It is surprisingly good at it.

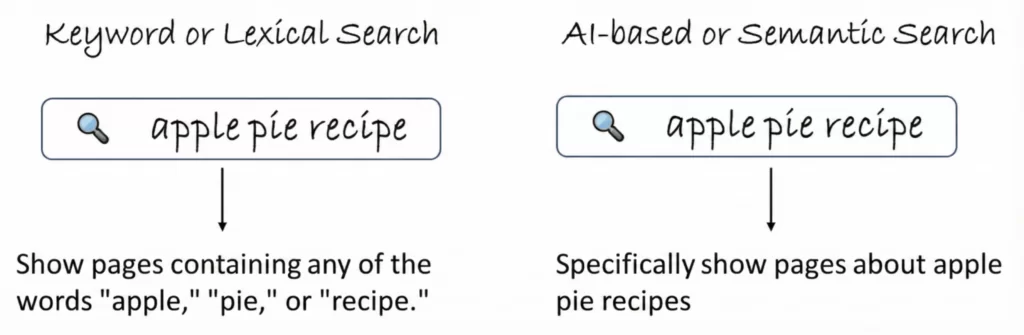

AI or semantic search engines process words and phrases according to their meaning rather than the literal text. So “heart attack,” “AMI,” and “myocardial infarction” all get recognized as related concepts. Traditional keyword-based engines like PubMed match your terms against indexed fields such as title, abstract, author list, keywords, and MeSH terms. PubMed’s Automatic Term Mapping expands some unquoted terms, but a quoted phrase switches that mapping off and has to appear as written.

That’s a huge advantage for discovery. And a real limitation for precision.

Because AI or semantic search has blind spots.

It ranks by relevance to meaning, not by impact, citation count, or methodological rigor. It tends to favor open access papers because that is what it can see easily. It can surface a perfect thematic match that was published in an obscure journal with three citations. And it can miss a landmark trial because the abstract uses different terminology than your query.

Worse, the AI tools researchers increasingly rely on for citations have a documented fabrication problem that should concern every clinical researcher. A 2025 study published in JMIR Mental Health tasked GPT-4o with writing six literature reviews on mental health topics. The result: roughly one in five citations (19.9% of 176) were completely fabricated. Among the real citations, 45.4% contained errors. Overall, only 43.8% of all AI-generated citations were both real and accurate. And among the fabricated citations that included DOIs, 64% linked to real but completely unrelated papers, making the errors harder to spot without manual verification.
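The three percentages in that study hang together, and the arithmetic is quick to check. The sketch below starts from the reported total (176) and the reported percentages. The intermediate counts are reconstructed by rounding, so treat them as an assumption rather than figures quoted from the paper.

```python
# Only the total (176) and the percentages come from the study as quoted above.
# The counts below are reconstructed by rounding and are an assumption.
TOTAL_CITATIONS = 176

fabricated = round(TOTAL_CITATIONS * 0.199)       # 19.9% fabricated -> 35
real = TOTAL_CITATIONS - fabricated               # 141 real citations
real_with_errors = round(real * 0.454)            # 45.4% of real ones -> 64
real_and_accurate = real - real_with_errors       # 77 real and accurate
usable_share = real_and_accurate / TOTAL_CITATIONS

print(f"fabricated:        {fabricated}")
print(f"real with errors:  {real_with_errors}")
print(f"real and accurate: {real_and_accurate}")
print(f"usable share:      {usable_share:.4f}")   # 0.4375, reported as 43.8%
```

In other words, fewer than half of the citations could be used as written.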

In January 2026, GPTZero analyzed over 4,000 papers accepted at NeurIPS 2025, the world’s flagship AI conference, and found hundreds of hallucinated citations across at least 53 papers. These were not rejected submissions. Each one had beaten out over 15,000 other papers and survived three or more rounds of peer review.

The lesson: AI can help you find literature. It cannot be trusted to cite it. Peer-reviewed studies show that even with GPT-4, 18-29 out of 100 citations are likely to be fabricated. And my own tests show that even state-of-the-art models such as GPT 5.4 and Opus 4.6 still hallucinate authors, journals, and facts. The problem is structural, not a glitch that updates will fix.
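One manual check that catches the “real DOI, wrong paper” failure is to compare the title an AI tool gives you with the title the DOI actually resolves to. The sketch below is a minimal illustration of that comparison using word overlap. The function names, the 0.5 threshold, and the example titles are all hypothetical, and looking up the registered title (for example on the DOI landing page) is left to you.

```python
import re

def title_tokens(title):
    """Lowercase a title and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", title.lower()))

def title_overlap(claimed, registered):
    """Jaccard overlap between two titles: shared words / all words."""
    a, b = title_tokens(claimed), title_tokens(registered)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)

def looks_mismatched(claimed, registered, threshold=0.5):
    """Flag a citation whose DOI resolves to a clearly different title.

    The threshold is an arbitrary starting point, not a validated cutoff.
    """
    return title_overlap(claimed, registered) < threshold

# Hypothetical titles, invented for this example.
claimed = "Blood pressure targets in the very elderly: a randomized trial"
same_paper = "Blood Pressure Targets in the Very Elderly: A Randomized Trial."
other_paper = "Soil microbiome diversity in alpine meadows"

print(looks_mismatched(claimed, same_paper))   # False
print(looks_mismatched(claimed, other_paper))  # True
```

A flag here only tells you where to look. The decision to keep or drop a citation still needs a human reading the paper.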

Why Hybrid Beats Both Pure AI and Pure Keyword Search

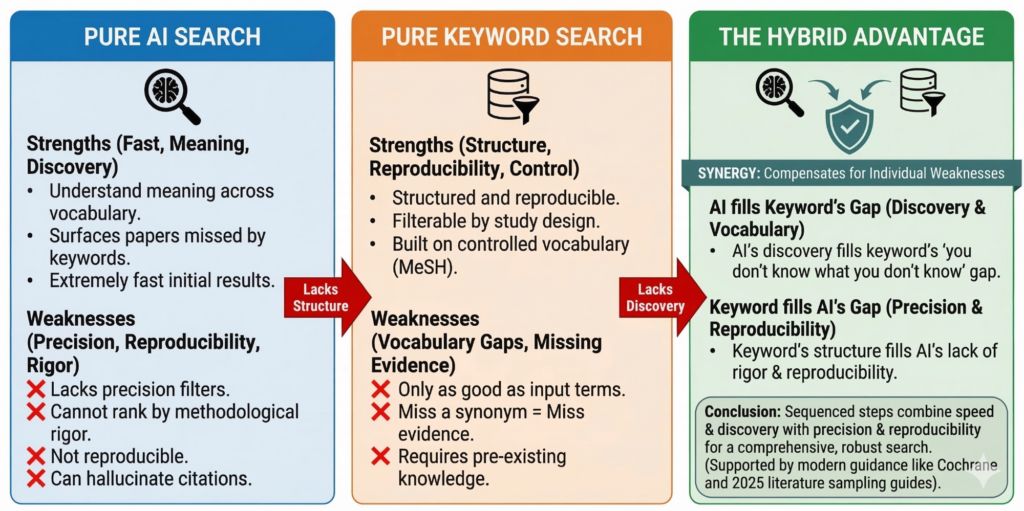

Both AI and keyword search approaches have real strengths and real blind spots.

AI-powered semantic search is fast, understands meaning across vocabulary barriers, and can surface papers you would never find with keywords alone. But it lacks precision filters. It cannot rank by methodological rigor. It is not reproducible. And it hallucinates citations at alarming rates.

Traditional keyword search (PubMed, Embase) is structured, reproducible, filterable by study design, and built on a controlled vocabulary (MeSH) that has been refined for decades. But it is only as good as the terms you feed it. Miss the right synonym, the right MeSH heading, the right trial name, and entire bodies of evidence disappear from your results.

Neither approach alone is enough. But combined, they compensate for each other’s weaknesses.

AI’s strength (vocabulary discovery, conceptual bridging) fills keyword search’s gap (you don’t know what you don’t know). Keyword search’s strength (precision, reproducibility, structured filtering) fills AI’s gap (relevance ranking without rigor assessment, non-reproducible results).

This is not a new idea in principle. The Cochrane Handbook has long recommended supplementary search methods alongside database searching. And the Birmingham City University guide to AI in literature searching cites published guidance confirming that AI tools should be used as a further search approach, in addition to the more traditional search methods.

What is new is doing this systematically.

A comprehensive 2025 guide to literature sampling published in Technological Forecasting and Social Change analyzed 404 systematic reviews from top-tier journals and found that inadequate guidance and overreliance on unfounded “best practices” often lead to suboptimal search strategies and incomplete reporting. The fix the authors recommend: sequenced, prioritized steps that combine scoping, searching, and screening into a structured workflow.

That’s exactly what our hybrid process does. AI scouts the landscape. You extract the vocabulary. Keyword search locks in the evidence.

Here’s exactly how I do it.

My Exact 3-Step Hybrid Literature Review Process

This is the workflow I use for every new project. It combines AI’s semantic reach with PubMed’s precision. It takes me from a vague research question to 40-60 high-quality papers in under an hour.

STEP 1: Deploy the AI Scout

Goal: Find 5-10 “signal” papers fast.

I take a messy, real-world clinical question and drop it into a semantic search tool. No Boolean logic. No MeSH terms. Just the question I actually want answered.

Something like: “Does intensive blood pressure lowering benefit people over 80?”

What AI gives back is not a textbook answer. It is a map.

- HYVET RCT (even though it never uses the word “intensive” in its abstract)

- SPRINT subgroup analysis (even though they say “older adults,” not “octogenarians”)

- Meta-analyses on BP targets in elderly populations

- Cohort studies linking BP control to stroke risk in the very old

Here’s why this matters: When I searched PubMed literally for "intensive blood pressure" AND "octogenarians", HYVET never showed up. AI surfaced it in 10 seconds.

The reason is architectural. A quoted PubMed phrase search looks for that exact phrase in indexed fields. If “octogenarians” does not appear in the title, abstract, or MeSH terms of a paper, PubMed will not return it. Semantic search locates texts that are semantically related to the query, even when they do not contain the exact query terms. That’s the difference between matching words and matching meaning.
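A toy example makes the difference concrete. The sketch below is not how any real search engine works: the hand-made concept table stands in for a learned semantic model, and the paper descriptions are illustrative one-liners written for this example, not real abstracts.

```python
# Illustrative one-line descriptions written for this sketch, not real abstracts.
PAPERS = {
    "HYVET-like trial": "Antihypertensive treatment in patients aged 80 years or older",
    "Unrelated study": "Statin therapy in middle-aged adults",
}

# Hand-made concept table (an assumption standing in for a semantic model).
CONCEPTS = {
    "very_old": ["octogenarians", "80 years or older", "very elderly"],
    "bp_lowering": ["intensive blood pressure", "antihypertensive treatment"],
}

def literal_match(phrases, text):
    """True only if every quoted phrase appears verbatim in the text."""
    lowered = text.lower()
    return all(phrase.lower() in lowered for phrase in phrases)

def concepts_in(text):
    """Return the names of all concepts with at least one phrase in the text."""
    lowered = text.lower()
    return {name for name, phrases in CONCEPTS.items()
            if any(phrase in lowered for phrase in phrases)}

def concept_match(query, text):
    """True if the text covers every concept the query mentions."""
    wanted = concepts_in(query)
    return bool(wanted) and wanted <= concepts_in(text)

QUERY_PHRASES = ["intensive blood pressure", "octogenarians"]
QUERY_TEXT = " ".join(QUERY_PHRASES)

for name, text in PAPERS.items():
    print(name, literal_match(QUERY_PHRASES, text), concept_match(QUERY_TEXT, text))
```

The literal match misses the HYVET-like description because neither quoted phrase appears in it. The concept match finds it because both ideas are present under different wording.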

The Birmingham City University guide to AI literature searching captures this well, noting that tools like Connected Papers and Research Rabbit allow you to search the references of eligible articles without needing to know all the keywords and alternative terms.

This step is about speed and vocabulary discovery. Not perfection.

STEP 2: Mine the Language

Goal: Extract the field’s actual vocabulary so you can search like an insider.

This is the step most researchers skip. And it is the most important one.

From those 5-10 signal papers, I pull four categories of language:

Population language. Terms like “aged 80 and over,” “very elderly,” “frail elderly,” “older adults.” I once searched for “octogenarians” and missed half the literature because most authors never use that word.

Intervention language. Terms like “tight control of hypertension,” “SBP less than 120 mmHg,” “aggressive BP lowering.” SPRINT never uses “intensive BP lowering” in its abstract. If you don’t include “SBP less than 120” or “tight control,” you will not find it.

Entity language. Trial names like HYVET, SPRINT. Outcome terms like “stroke,” “CV events,” “mortality.”

MeSH terms. This is where things get powerful.

Scroll to the bottom of a PubMed abstract page. You will find a list of MeSH terms that indexers have assigned to that paper. PubMed indexers typically assign 10-12 MeSH terms to describe every indexed paper. These are not random tags. They come from a controlled vocabulary of roughly 30,000 pre-determined terms maintained by the National Library of Medicine, arranged in hierarchical tree structures and updated every year to capture new developments.

The critical advantage: when searching with MeSH terms, you are not searching “freetext.” You will not have to worry about word variations, word endings, plural or singular forms, or synonyms. The MeSH system pulls together all articles on a concept regardless of the terminology used by the author.

For HYVET, I discovered these MeSH terms:

- “Hypertension/drug therapy”

- “Aged, 80 and over”

- “Blood Pressure/drug effects”

I saw “Aged, 80 and over” in MeSH and realized I had never included that term in my queries. Adding it doubled my retrieval.

This is the real currency of a good literature review. Not finding more papers. Finding the right terms so that your search captures the papers that matter.
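If you export your signal papers from PubMed as XML, the MeSH headings can be tallied instead of copied by hand. The sketch below assumes the standard PubMed XML element names (`PubmedArticle`, `MeshHeading`, `DescriptorName`, `QualifierName`). The sample records are hand-written fragments with placeholder PMIDs and stripped attributes, not the real HYVET or SPRINT records.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hand-written sample in the shape of a PubMed XML export (placeholder PMIDs).
SAMPLE_XML = """
<PubmedArticleSet>
  <PubmedArticle><MedlineCitation><PMID>00000001</PMID><MeshHeadingList>
    <MeshHeading><DescriptorName>Hypertension</DescriptorName>
      <QualifierName>drug therapy</QualifierName></MeshHeading>
    <MeshHeading><DescriptorName>Aged, 80 and over</DescriptorName></MeshHeading>
  </MeshHeadingList></MedlineCitation></PubmedArticle>
  <PubmedArticle><MedlineCitation><PMID>00000002</PMID><MeshHeadingList>
    <MeshHeading><DescriptorName>Blood Pressure</DescriptorName>
      <QualifierName>drug effects</QualifierName></MeshHeading>
    <MeshHeading><DescriptorName>Hypertension</DescriptorName>
      <QualifierName>drug therapy</QualifierName></MeshHeading>
    <MeshHeading><DescriptorName>Aged, 80 and over</DescriptorName></MeshHeading>
  </MeshHeadingList></MedlineCitation></PubmedArticle>
</PubmedArticleSet>
"""

def mesh_terms(article):
    """List a paper's MeSH terms as 'Descriptor' or 'Descriptor/qualifier'."""
    terms = []
    for heading in article.iter("MeshHeading"):
        descriptor = heading.findtext("DescriptorName")
        qualifiers = [q.text for q in heading.findall("QualifierName")]
        if qualifiers:
            terms.extend(f"{descriptor}/{q}" for q in qualifiers)
        else:
            terms.append(descriptor)
    return terms

def tally_mesh(xml_text):
    """Count how many papers carry each MeSH term."""
    root = ET.fromstring(xml_text.strip())
    counts = Counter()
    for article in root.iter("PubmedArticle"):
        counts.update(set(mesh_terms(article)))
    return counts

counts = tally_mesh(SAMPLE_XML)
for term, n in counts.most_common():
    print(f"{n} paper(s): {term}")
```

Terms that recur across most of your signal papers are the strongest candidates for the Step 3 search string.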

STEP 3: Drill Down with Targeted Keyword Search

Goal: Build a reproducible, high-precision paper set.

Now I build a PubMed search string using everything from Step 2.

Here is what that looks like in practice:

("hypertension"[Title/Abstract] OR "high blood pressure"[Title/Abstract])

AND

("tight control"[Title/Abstract] OR "intensive therapy"[Title/Abstract] OR "SBP <120"[Title/Abstract])

AND

("aged, 80 and over"[MeSH] OR "very elderly"[Title/Abstract] OR "HYVET"[Title/Abstract])

When I run this, all pivotal trials show up. Additional cohort studies and meta-analyses appear. Noise drops dramatically.

I usually land with 40-60 high-impact papers in 10-12 minutes.
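A search string like the one above can also be assembled from the Step 2 vocabulary, which keeps the terms in one place and makes the search easy to rerun. This is a minimal sketch: the `VOCABULARY` layout and function names are assumptions for illustration. The URL targets NCBI’s E-utilities ESearch endpoint with its documented `db`, `term`, `retmode`, and `retmax` parameters, and the code only builds the URL without calling it.

```python
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"

# Each concept is a list of (term, field tag) pairs taken from the query above.
VOCABULARY = {
    "condition": [
        ("hypertension", "Title/Abstract"),
        ("high blood pressure", "Title/Abstract"),
    ],
    "intervention": [
        ("tight control", "Title/Abstract"),
        ("intensive therapy", "Title/Abstract"),
        ("SBP <120", "Title/Abstract"),
    ],
    "population": [
        ("aged, 80 and over", "MeSH"),
        ("very elderly", "Title/Abstract"),
        ("HYVET", "Title/Abstract"),
    ],
}

def or_group(terms):
    """Join one concept's synonyms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"[{field}]' for term, field in terms) + ")"

def build_query(vocabulary):
    """AND the concept groups together, in the order they were defined."""
    return " AND ".join(or_group(terms) for terms in vocabulary.values())

def esearch_url(query, retmax=100):
    """Build (but do not send) an ESearch request for the query."""
    params = {"db": "pubmed", "term": query, "retmode": "json", "retmax": retmax}
    return f"{ESEARCH}?{urlencode(params)}"

query = build_query(VOCABULARY)
print(query)
print(esearch_url(query))
```

Synonyms are joined with OR inside a concept, and concepts are joined with AND. Adding a new term from Step 2 means adding one line to the table rather than editing a long string by hand.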

This is the moment the review clicks.

Notice what happened here. Step 1 (AI) found papers I would have missed with keywords alone. Step 2 (human expertise) extracted the vocabulary the field actually uses. Step 3 (keyword search) built a reproducible, verifiable search that captures the evidence base with high precision and low noise.

Each step compensates for the weaknesses of the others.

AI’s weakness is precision and reproducibility. Keyword search’s weakness is vocabulary gaps. Human expertise’s weakness is speed. Combined, they cover all three.

Now you have:

✅ A clean evidence set

✅ A reproducible search your co-authors can verify, your PRISMA diagram can reference, and your grant reviewers can trust

✅ Confidence that the landmark studies in your area are accounted for
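One way to back up that last checkmark is to confirm that the keyword search retrieves every signal paper from Step 1. The sketch below is a minimal check under that assumption. The function names are hypothetical and the PMIDs are placeholders, not real identifiers.

```python
def missing_signal_papers(signal_pmids, retrieved_pmids):
    """Signal papers from the AI scout that the keyword search failed to return."""
    return sorted(set(signal_pmids) - set(retrieved_pmids))

def signal_recall(signal_pmids, retrieved_pmids):
    """Share of signal papers that the keyword search recovered."""
    signal = set(signal_pmids)
    if not signal:
        raise ValueError("need at least one signal paper")
    return len(signal & set(retrieved_pmids)) / len(signal)

# Placeholder PMIDs, invented for this example.
signal = ["10000001", "10000002", "10000003", "10000004"]
retrieved = ["10000001", "10000002", "10000004", "20000001", "20000002"]

print(missing_signal_papers(signal, retrieved))  # ['10000003']
print(signal_recall(signal, retrieved))          # 0.75
```

Any paper in the missing list points to a vocabulary gap: check its title, abstract, and MeSH terms for the wording your search string still lacks.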

You Still Have to Read the Papers

This is the part no amount of AI will bypass.

AI can surface papers. It can summarize them. It can tell you which ones cite which. But it cannot evaluate whether the methodology holds up for your specific question. It cannot decide if a study’s population matches yours closely enough to cite. It cannot feel the gap in the literature that your project fills.

For any research paper, you must do a complete literature search and include relevant work, even if it does not support your conclusion or the novelty of your project. Editors and reviewers will run their own literature search, and a missing publication is hard to recover from.

That kind of comprehensive, judgment-laden literature engagement cannot be delegated to AI. It requires reading. It requires thinking. It requires the clinical context that only a researcher brings to the table.

Ethan Mollick captures this beautifully in Co-Intelligence when describing his own process. He writes that reading papers was often a Centaur task, one in which he knew the AI exceeded his capabilities in summarizing, while he exceeded it in understanding.

That is the right mental model. AI compresses the scouting phase from days to minutes. But the reading, the thinking, the evaluating. That is still yours. And it should be.

We Built This Into Research Boost

We have been quietly building and perfecting this hybrid algorithm over the past year.

The process described above, the AI scout to vocabulary extraction to targeted keyword retrieval, is exactly what Research Boost automates. We combine AI semantic search with structured keyword retrieval to give you both speed and precision in a single workflow.

Don’t take my word for it.

Try it here FREE: researchboost.com


💬 What’s your biggest frustration with AI literature reviews right now?

⚠️ Disclaimer: The generated comprehensive internal literature synthesis is for your research project and is not intended for journal submission.

Images in the article were made iteratively with nano banana pro.

PROMPT OF THE WEEK

Proofreader

You are a skilled proofreader and editor with a keen eye for detail and a mastery of the English language.

Your task is to thoroughly review the provided text, identify any errors or areas for improvement, and provide a polished, error-free version.

<input>

Text: ${inputVariable1}

</input>

Relax, take a deep breath, and follow this process step-by-step:

Step 1: Read through the entire text to get a general sense of its content, style, and intended meaning.

Step 2: Re-read the text slowly and carefully, looking for any of the following:

- Spelling errors

- Grammar mistakes (e.g. subject-verb agreement, pronoun case, verb tense)

- Punctuation errors

- Awkward phrasing or sentence structure

- Redundancy or unnecessary wordiness

- Inconsistencies in style or tone

Step 3: Note each error or suggestion for improvement. For each, provide:

- The original phrase

- An explanation of the issue

- Your suggested correction

Step 4: After listing all suggested changes, re-read the original text, applying your edits to create a polished, error-free version.

<constraints>

Preserve the original meaning and intent of the text.

Do not make changes that alter the core message.

Ensure changes improve clarity, concision, and readability.

Do not introduce any new errors in your revised version.

Provide explanations that are clear and easy for a general audience to understand.

Avoid overly technical grammatical terms.

</constraints>

Important - make sure your output closely follows this writing style:

<writing style>

Use clear, direct language and avoid complex terminology.

Aim for a Flesch reading score of 80 or higher.

Use the active voice.

Avoid adverbs.

Avoid buzzwords and instead use plain English.

Use jargon where relevant.

Avoid being salesy or overly enthusiastic and instead express calm confidence.

</writing style>

Output format:

Text Review Summary:

[Provide a 1-2 sentence overview of the general quality of the writing and the main areas for improvement]

Suggested Changes:

Original: [quote the original phrase]

Issue: [explain the problem]

Correction: [provide your suggested edit]

Original: ...

[continue listing suggested changes]

Revised Text: [Provide the full text with all suggested edits applied]