At this year’s annual rheumatology conference, every AI conversation eventually circled back to the same question.

In hallways.

Over coffee.

In those between-session chats where the real discussion happens.

“Which model are you using for Research Boost?”

“And how does it do on the benchmarks?”

(For context, Research Boost is an agentic AI tool that I developed for brainstorming research questions and for academic writing. I mention it here not to promote it, but as a real-world example of a clinical research tool that I have spent thousands of hours improving.)

It’s a completely reasonable way to think about AI.

If you’re not living inside this stuff every day, public benchmarks are one of the few visible signals you can grab onto.

GPT-5.1 beats X on math. Gemini 3 beats Y on reasoning. The curves all point up and to the right.

But there’s a huge gap between those charts and what we actually care about in clinical research.

When I’m wearing my physician–scientist hat, I’m not asking:

- “Can this model hit 92% on some PhD-level STEM exam?”

I’m asking:

- “Can I trust this to help me draft a methods section without sneaking in errors?”

- “Does it hallucinate trial details in subtle ways?”

- “Does its writing fit the tone I’d be comfortable submitting under my name?”

Once you’re already using frontier models, leaderboard benchmarks are necessary but nowhere near sufficient.

To really understand if an AI belongs in your research workflow, you need 2 extra layers:

- Benchmarking on vibes – your expert, intuitive “feel” for how a model behaves on your work.

- Benchmarking in the real world – systematic tests on real clinical research tasks, inside real workflows.

Benchmarks tell you the model is powerful.

Vibes and real-world tests tell you if it’s safe and useful for you (and your specific use case).

The benchmark race is real (and mostly beside the point)

Let’s start by giving GPT-5.1 and Gemini 3, the new models that dropped this month, their due.

On paper, they’re both absurdly capable:

- GPT-5.1

- Strong performance on math and coding benchmarks

- Adaptive “think harder when needed” behavior

- Excellent at building multi-step, tool-using agents (exactly what we need for research workflows)

- Gemini 3 Pro

- Record-breaking scores on ultra-hard reasoning and knowledge tests (GPQA Diamond, ARC-style puzzles, etc.)

- Impressive spatial reasoning and long-context retrieval

- Trained at massive scale on Google’s own hardware, with multimodal and huge context windows baked in

If you look only at the public plots, the story is:

- Everything is going up and to the right

- All the big models cluster in the “90%+ on hard exams” region

- The exact ordering (GPT vs Gemini vs Claude) changes per benchmark, but they all look terrifyingly strong

Those numbers are genuinely useful when you’re deciding:

- “Should I rely on a small open model vs a frontier model?”

- “Is this thing even in the same league as human experts on generic tasks?”

But they don’t answer the questions that matter on a weeknight when you’re trying to finish a draft:

- “Will this model describe my clinic cohort in a way that a reviewer can reproduce?”

- “Will it flatten a nuanced limitation into a throwaway sentence?”

- “Will it quietly invent a subgroup analysis that never actually happened?”

For that, you have to go beyond the leaderboard and start interviewing the model for the job you actually need done.

Benchmarking on vibes

In the broader AI world, “benchmarking on vibes” usually means giving every new model the same quirky task: draw a pelican on a bike, write a weird short story, build a tiny game. Then you see how it “feels.”

I do something similar, but my “vibe bench” is built around clinical research.

When a new model drops, I don’t start with MMLU or GPQA.

I start with whatever messy thing is already open in my manuscript window.

For example:

- “Rewrite this methods paragraph for a rheumatology journal. Keep all numbers and effect sizes. Shorten by 20%. Make it clear and precise.”

- “You are a grumpy senior methods reviewer. Highlight any sentence in this Methods section that is vague, underspecified, or likely to trigger a reviewer comment. Suggest a fix.”

- “Given this abstract + table, list 3 plausible alternative explanations for the association that are not confounding by measured variables.”

Within 10–15 minutes, each model reveals its “personality.”

What GPT-5.1 feels like in practice

When we were testing GPT-style models for Research Boost, the pattern was very consistent:

- Huge strengths

- Great at writing and refactoring R / Python code

- Strong at multi-step reasoning and tool orchestration

- Very good at managing long conversations and context

- But also a very distinct stylistic quirk

- A deep love for polished, slightly flowery language

We really tried to tame this.

We talked to the model about:

- parallelism

- ghost nouns

- avoiding unnecessary adverbs

- keeping sentences short and direct

We gave before/after examples. We built strict system prompts.

And it would still give us:

“This comprehensive, robust observational cohort study…”

When what I actually wanted was:

“We conducted a retrospective cohort study…”

It’s not “wrong.”

It’s just not how I want my methods section to read, or how I want my mentees to write.

That’s a vibe.

What Gemini 3 Pro feels like in practice

Earlier versions of Gemini were almost the opposite:

- The writing was quietly bland

- Short sentences

- Neutral tone

- Very few flourishes

For creative writing, this was not so great. For medical writing, it was a feature, not a bug.

It felt like a diligent research assistant summarizing trials for journal club—clear, a bit dry, and easy to trim or adapt.

With Gemini 3 Pro, that changed (sort of).

- It became much stronger at creative writing and expressive language

- Great for essays, teaching material, or general science communication

But it still remains my preferred choice for writing manuscripts. It can write without the literary flair, which is exactly what we want to avoid in medical writing (CLEAR > CLEVER).

But when we used Gemini in a search + evidence extraction role inside Research Boost, a different vibe emerged:

- Trial names sometimes morphed into similar-but-not-quite-right versions

- Guideline years shifted

- Effect sizes were occasionally paraphrased into something almost correct, but not precise enough for a results table

For a casual blog post, that might be acceptable.

For a manuscript, it isn’t.

That’s another vibe.

Why vibes matter (and where they fall short)

Up close, on real clinical research tasks:

- GPT-5.1 feels like a brilliant, overpolished writer who needs constant toning-down

- Gemini 3 feels like a powerful, slightly more adventurous colleague who sometimes “rounds off” details in ways that make me nervous

Those intuitive impressions are incredibly useful.

They help you very quickly answer questions like:

- “Do I trust this model with my first draft?”

- “Would I let it write the limitations section unsupervised?”

- “If a tired fellow used this assistant at 11 pm, would it drag them toward clarity or toward confusion?”

But vibes are also:

- subjective

- dependent on your own preferences and blind spots

- sensitive to which exact prompt you used that day

So vibes are step 1.

Step 2 is treating your model like a serious job candidate and benchmarking it in the real world.

Benchmarking in the real world (for your workflow)

This is where we borrow the spirit of something like OpenAI’s GDPval and adapt it to clinical research.

GDPval, in short, did this:

- Industry experts with ~14 years of experience created complex, realistic tasks that usually take 4–7 hours

- Several AI models and human experts did those tasks

- A separate group of experts graded the outputs blind (no idea who was human vs AI)

That’s the basic pattern we want, but in our world.

Think of it as:

Interviewing the model for a specific role in your research group.

Here’s roughly how we’re doing it inside Research Boost, and how you can apply the same idea even if you’re just using ChatGPT / Gemini directly.

1️⃣ Start with real tasks, not toy prompts

The worst way to evaluate a clinical research model:

“Explain p-values in simple language.”

The better way:

“Take this actual draft methods section from our PsA cohort study and:

– clarify the inclusion/exclusion criteria

– make the cohort selection steps reproducible

– keep the total length within ±10%.”

Other good real-world benchmark tasks:

- Turn bullet-point notes from a mentee into a first-draft Introduction

- Tighten a Results paragraph while preserving every number and confidence interval

- Summarize 8–10 key papers into a focused, reference-rich background paragraph (no invented trials)

- Rewrite a limitations section to be more honest and specific (no generic “residual confounding may exist” without detail)

Use your actual work:

- real drafts

- real tables

- real messiness

That’s where models either prove themselves or fall apart.
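Two of the constraints in these tasks ("preserve every number" and "keep the length within ±10%") are mechanical enough to check with a few lines of code before you even start reading for quality. Here is a minimal Python sketch. The function names and both example sentences are invented for illustration, and the number matching is deliberately crude: it ignores minus signs and splits values like "1,200", so treat it as a first-pass filter rather than a validated tool.

```python
import re

# Integers, decimals, and percentages, e.g. 412, 0.62, 95%.
NUMBER_PATTERN = re.compile(r"\d+(?:\.\d+)?%?")


def extract_numbers(text):
    """Return every numeric token in the text, in order of appearance."""
    return NUMBER_PATTERN.findall(text)


def missing_numbers(source, target):
    """Numbers found in `source` that have no match left in `target`."""
    remaining = extract_numbers(target)
    missing = []
    for token in extract_numbers(source):
        if token in remaining:
            remaining.remove(token)  # consume one match so repeats are counted
        else:
            missing.append(token)
    return missing


def length_within(original, rewrite, tolerance=0.10):
    """True if the rewrite's word count is within ±tolerance of the original's."""
    n_original = len(original.split())
    n_rewrite = len(rewrite.split())
    return abs(n_rewrite - n_original) <= tolerance * n_original


# Invented example sentences, not taken from any real manuscript.
original = "We included 412 patients; the hazard ratio was 0.62 (95% CI 0.41 to 0.93)."
rewrite = "Among 412 patients, the hazard ratio was 0.6 (95% CI 0.41 to 0.93)."

print("Dropped:", missing_numbers(original, rewrite))  # ['0.62']
print("Added:", missing_numbers(rewrite, original))    # ['0.6']
print("Length OK:", length_within(original, rewrite))  # True
```

In this example the model quietly rounded 0.62 to 0.6. That is exactly the kind of "almost correct" edit that is easy to miss on a tired read and easy to catch with a diff like this.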

2️⃣ Decide what “good” looks like before you see outputs

Otherwise, you’ll unconsciously reward whichever model sounds the most confident.

For manuscript-related tasks, my rubric usually includes:

- Structure

- Does it respect IMRaD and the reporting guideline for the study type (such as STROBE)?

- Do paragraphs flow in a logical order?

- Argument quality

- Are claims explicitly tied to data?

- Does it avoid over-claiming from observational analyses?

- References & facts

- Are the cited papers real and relevant?

- Do sample sizes, effect sizes, and follow-up times match the originals?

- Any fabricated guidelines or trial names?

- Hallucination risk

- Any confident, specific statements that you can’t trace back to the input?

- Clarity

- Can a tired reviewer understand this in one read?

- Are sentences short, concrete, and unambiguous?

Give each criterion a 1–5 score.

Define the rubric before you look at anything the models produce.

Now you’re benchmarking against standards, not just vibes.
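If you prefer code to a spreadsheet, the rubric above can be written down as a small data structure so that every output is scored on the same criteria and nothing gets skipped. This is only a sketch: the class name, criterion keys, and example scores are my own placeholders, and I am assuming 5 is always the best score (so a 5 on hallucination risk means the lowest risk).

```python
from dataclasses import dataclass, field

# The five rubric criteria from the list above, as short keys.
CRITERIA = (
    "structure",
    "argument_quality",
    "references_and_facts",
    "hallucination_risk",  # assumption: 5 = lowest risk
    "clarity",
)


@dataclass
class RubricScore:
    """Scores for one output on a fixed 1-5 rubric."""

    output_id: str
    scores: dict = field(default_factory=dict)

    def rate(self, criterion, score):
        if criterion not in CRITERIA:
            raise ValueError(f"Unknown criterion: {criterion}")
        if not 1 <= score <= 5:
            raise ValueError("Scores must be between 1 and 5")
        self.scores[criterion] = score

    def is_complete(self):
        """True once every criterion has been scored."""
        return set(self.scores) == set(CRITERIA)

    def average(self):
        if not self.is_complete():
            raise ValueError("Score every criterion before averaging")
        return sum(self.scores.values()) / len(self.scores)


# Placeholder scores for one anonymized output.
draft = RubricScore("Output A")
for criterion, score in zip(CRITERIA, (4, 3, 5, 3, 4)):
    draft.rate(criterion, score)

print(draft.output_id, draft.average())  # Output A 3.8
```

A single average hides a lot, so I would still look at the per-criterion scores. A model that gets 5 on clarity and 2 on references is a very different colleague from one that gets 3.5 on both.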

3️⃣ Run frontier models head-to-head (ideally blind)

At this point in history, the “frontier tier” for most workflows is some mix of:

- GPT-5.1

- Gemini 3

- Claude Opus 4.5

(Others include Grok and models developed outside the US.)

I recommend testing at least the 3 I mention here.

Give them the same tasks with the same instructions.

If you can, hide which output came from which model and score them using your rubric.
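Blinding is easy to do by hand with two people, but if you are scoring alone, a short script can shuffle the outputs and keep the answer key out of sight until you are done. A minimal Python sketch follows. The function names and the placeholder draft texts are hypothetical, and nothing here calls a model API: you paste in outputs you have already collected.

```python
import random
from typing import Optional


def blind_outputs(outputs_by_model: dict, seed: Optional[int] = None):
    """Shuffle outputs and relabel them so the grader cannot tell which model wrote what.

    Returns (blinded, key): `blinded` maps neutral labels to text,
    `key` maps the same labels back to model names.
    """
    rng = random.Random(seed)
    models = list(outputs_by_model)
    rng.shuffle(models)
    blinded, key = {}, {}
    for index, model in enumerate(models):
        label = f"Output {chr(ord('A') + index)}"
        blinded[label] = outputs_by_model[model]
        key[label] = model
    return blinded, key


def unblind(scores_by_label: dict, key: dict) -> dict:
    """Map scores given to neutral labels back to model names."""
    return {key[label]: score for label, score in scores_by_label.items()}


# Placeholder texts standing in for real model outputs.
outputs = {
    "GPT-5.1": "draft text from model 1",
    "Gemini 3": "draft text from model 2",
    "Claude Opus 4.5": "draft text from model 3",
}

blinded, key = blind_outputs(outputs)
for label in sorted(blinded):
    print(label, "->", blinded[label])  # score these without looking at `key`
```

Once the rubric scores are in, `unblind(scores, key)` tells you which model earned which score. Stylistic tells (like the flowery phrasing described earlier) can still give a model away, so blinding reduces bias rather than removing it.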

Congratulations, you’ve just built:

A tiny, domain-specific GDPval for your lab.

You’re no longer asking:

- “Is Gemini 3 four points better than GPT-5.1 on some PhD-level benchmark?”

You’re asking:

- “On our actual PsA methods section, which model produces a clearer, more accurate, more reviewer-friendly draft?”

That’s the only question that matters.

4️⃣ If you use AI agents (or an agentic architecture), test both the parts and the whole

As an example, Research Boost isn’t a single chatbot. It’s a small team of:

- an evidence agent – searches, screens, and extracts from papers

- a key-elements agent – pulls out design, population, exposure, comparator, outcomes, stats

- a writer agent – drafts IMRaD sections

- an editor agent – tightens language and aligns with journal expectations

- plus some helpers for outlining, critique, and revision loops

Benchmarking that ecosystem has 2 layers:

I. Agent-level benchmarking

For each agent, ask:

- Search/evidence agent

- Does it miss key trials?

- How often does it hallucinate details (e.g., wrong year, wrong dose, invented outcome)?

- Extractor agent

- Does it reliably capture N, primary outcome, follow-up, effect size, and key inclusion criteria?

- Does it mislabel exposure or comparator?

- Writer agent

- Does it preserve every number and confidence interval?

- Does it respect requested style and length?

Here, you can literally use a spreadsheet and treat it like a small validation study.
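For the extraction agent, the "small validation study" can be as simple as comparing what the agent extracted against values you pulled by hand, field by field. Below is a minimal Python sketch of that comparison. The field names, paper IDs, and every value in the example are invented placeholders, and exact matching is a strict assumption: in practice you may want to normalize units or wording before comparing.

```python
FIELDS = ("n", "primary_outcome", "follow_up", "effect_size")


def field_accuracy(gold: dict, extracted: dict) -> dict:
    """Share of papers where the agent's value exactly matches the hand-checked value.

    Both arguments map a paper ID to a dict of field values.
    A paper or field missing from `extracted` counts as an error.
    """
    if not gold:
        raise ValueError("Need at least one hand-checked paper")
    correct = {name: 0 for name in FIELDS}
    for paper_id, gold_row in gold.items():
        extracted_row = extracted.get(paper_id, {})
        for name in FIELDS:
            if extracted_row.get(name) == gold_row[name]:
                correct[name] += 1
    return {name: correct[name] / len(gold) for name in FIELDS}


# Invented placeholder data, not from real papers.
gold = {
    "paper_1": {"n": 120, "primary_outcome": "outcome X at week 24",
                "follow_up": "24 weeks", "effect_size": "OR 1.8"},
    "paper_2": {"n": 342, "primary_outcome": "outcome Y at week 52",
                "follow_up": "52 weeks", "effect_size": "HR 0.70"},
}
extracted = {
    "paper_1": {"n": 120, "primary_outcome": "outcome X at week 24",
                "follow_up": "24 weeks", "effect_size": "OR 1.8"},
    "paper_2": {"n": 342, "primary_outcome": "outcome Y at week 52",
                "follow_up": "48 weeks", "effect_size": "HR 0.70"},
}

for name, accuracy in field_accuracy(gold, extracted).items():
    print(f"{name}: {accuracy:.0%}")
```

With only two papers the percentages mean very little. The point is the habit: a hand-checked reference set, a fixed list of fields, and an error rate per field that you can track each time you swap models.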

II. System-level benchmarking

Then zoom out and ask:

“Given a vague question like

‘What’s the evidence for TNF inhibitors vs IL-17 inhibitors in severe PsA with axial involvement?’

can the full pipeline produce something I’d be willing to sign after editing?”

Look at:

- how errors propagate across steps

- where hallucinations leak through

- where the tone drifts away from your voice

Some models look great in isolation but behave very differently once you chain them together.

(We learned that the hard way.)

5️⃣ Run a small real-world pilot (this is where truth lives)

The final benchmark is always the same:

How does this system behave on a real project, with real people, under real constraints?

Pick one manuscript:

- Ask your team (or mentees) to deliberately use the AI for:

- outlining

- drafting one section

- revising one section

- Then collect very specific feedback:

- Which AI-generated passages did they keep?

- Where did they instinctively overwrite everything?

- Where did they get nervous about subtle errors?

- Where did they feel relieved that the AI handled something well?

This is basically post-deployment monitoring for a writing tool.

We already do this for clinical prediction models and risk scores.

We just haven’t consistently applied the same mindset to manuscript assistants.

But if an AI system is helping shape the way evidence is framed and argued, it deserves exactly that level of rigor.

So what do you actually do next?

If you’re a clinical researcher thinking about using AI for writing, here’s a simple plan:

- Use public benchmarks only to choose your tier

- Decide: frontier model vs small local model.

- Don’t obsess over tiny differences between top models.

- Build a small “vibe bench” from your real work

- 3–5 tasks pulled straight from your current projects.

- Run GPT-5.1, Gemini 3, etc.

- Notice how each feels in terms of tone, faithfulness to data, and tendency to oversell.

- Turn vibes into a basic rubric

- Structure, argument quality, references, clarity, and hallucinations.

- Score blindly if you can.

- If you use agents, evaluate parts and whole separately

- Search, extraction, writing, editing.

- Then the full pipeline on a realistic question.

- Do one real-world pilot and listen carefully to the friction

- Where users trust it vs override it.

- Where they speed up vs slow down.

- That’s your real benchmark.

Benchmarks are incredibly valuable.

They tell us that we aren’t dealing with toys anymore—that GPT-5.1, Gemini 3, and Claude Opus 4.5 really are operating at or above human level on many formal tests.

But:

Your manuscript doesn’t live on MMLU. It lives in peer review.

The practical question isn’t:

- “Which model topped the latest leaderboard?”

It’s:

- “Does this model make me a sharper, more careful clinical researcher—or am I now babysitting a very fast, very confident intern?”

Public benchmarks can’t answer that.

Your vibes and your real-world benchmarks can.

PROMPT OF THE WEEK

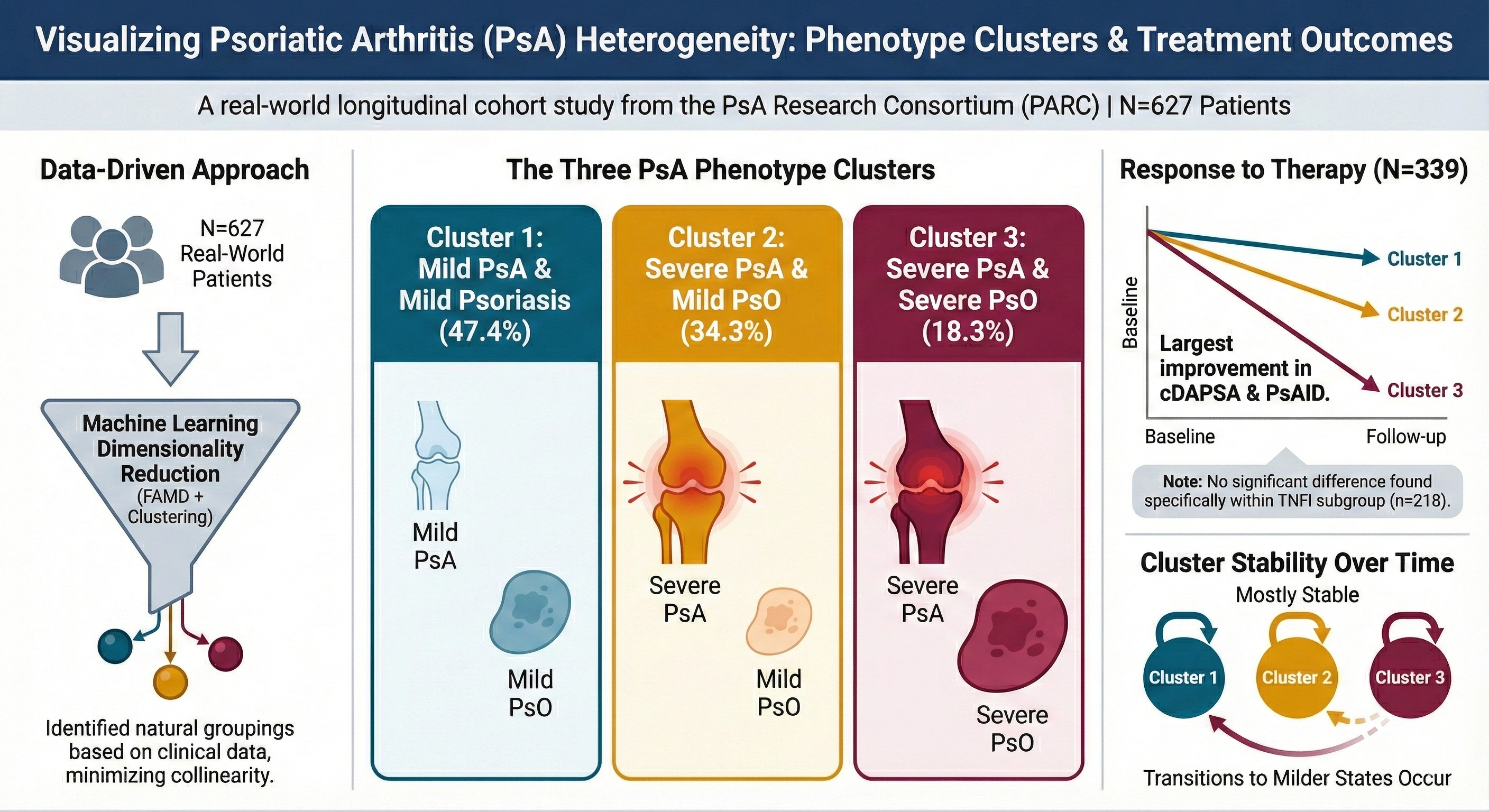

Speaking of hiring, you definitely want to hire this scientific illustrator.

It is wild that we have gotten this far this fast.

Here’s how to access Nano-Banana (the new one) before copying my prompts:

1. Go to gemini.google.com.

2. Click on “Tools” and select “Create images”.

3. Make sure to turn on “Thinking” on the bottom right.

Copy and paste this prompt:

You are a **Scientific Infographic Designer**.

Create a **journal-ready infographic** that summarizes and visualizes the study.

**Workflow (do both steps in one go, without asking questions):**

1. **First output a JSON plan** for the infographic with this structure:

`{
  "title": "",
  "subtitle": "",
  "core_message": "",
  "sections": [
    {
      "name": "",
      "content_summary": "",
      "visual_type": "",
      "layout_position": ""
    }
  ],
  "layout": {
    "overall_structure": "",
    "reading_order": ""
  },
  "color_palette": {
    "primary_colors": [],
    "accent_colors": [],
    "accessibility_notes": ""
  },
  "typography_notes": "",
  "iconography_notes": "",
  "callout_boxes": []
}`

2. **Then immediately create the infographic design** based on that JSON, describing:

◦ Layout (top/middle/bottom or left/right structure)

◦ Main visual elements (blocks, arrows, icons, timelines, clusters, etc.)

◦ Text content for each section (short headings + 1–2 lines)

◦ How colors are applied using good **color theory** (contrast, harmony, journal-safe, red/green–safe)

◦ How visual hierarchy is implemented (what pops first, second, third)

**Design principles:**

• Clean, balanced, **minimal** layout

• Strong visual hierarchy (clear headline, subheads, small details)

• Harmonious, high-contrast, **journal-safe** color palette

• Accessible and uncluttered; no dense paragraphs

• No footnotes or long legends inside the graphic

**Input abstract/paper:**

---

`[PASTE ABSTRACT HERE]`

...

4. Say “ok” if the description looks ok to you. Otherwise, ask it to edit.

5. It will then output the image.

(Below is what I got after copying and pasting my recent abstract. I would have paid $$$ for this.)

If you want to revise any part (colors, icons, shapes, emphasis), just tell it. Nano-Banana is shockingly good at targeted edits.

⚠️ Word of caution: Most journals do not allow AI graphics (yet). Journals currently take a firm stance on this, but as with AI writing, that stance will likely change as models keep improving.

P.S. We are rolling out important updates to Research Boost this week – you can expect to see even better quality and faster outputs from your favorite tool. Please do provide your feedback by replying to this email. Your suggestions will shape the future of academic writing.

(I read all replies personally.)