I’ve got grand rounds coming up in a few weeks. After that, a state-of-the-art lecture on psoriatic arthritis at a society meeting. Both rooms are smart, busy, and built to spot a weak argument.

Most people assume I use AI to write these talks.

I don’t (at least not in the way they think).

I use AI to find what’s missing, what won’t land, and where the logic breaks.

The talk itself still has to come from me. The clinical insight, the patient story, the read of the room. AI can’t do those. What it can do is sharpen the version of you that walks up to the podium.

Here’s what I’ll cover today:

- Why AI works better as a critic than a co-author

- The virtual audience trick for academic rooms

- How I now run literature review for talk prep

- The five-step playbook you can use this week

1. AI as critic, not co-author

Most researchers reach for ChatGPT and ask it to “write me a 30-minute talk on X.”

The output is fine. Generic. Forgettable.

It also sounds nothing like you.

I work the other way around. I draft my outline by hand first. Then I hand it to Claude and ask the kinds of questions a good critic would ask:

- Where does this argument fall apart?

- What’s the strongest counter a skeptical reviewer would raise on this slide?

- Which of these claims would a methodologist push back on?

- Is the gap I framed in slide 3 actually a gap, or am I missing a recent paper that already answered it?

Ethan Mollick describes this same shift in Co-Intelligence. He works with AI as a coeditor, going back and forth, refining. He even gives the model distinct critic personas with clear roles. One of his is a pompous, simplifying editor who pushes him to cut what doesn’t earn its place. Another is an everyperson reader who tells him when he’s lost in the weeds.

That is the shift. From writer to reader-stand-in.

For a scientific talk, this matters more than for almost any other kind of presentation. Your audience is trained to find the gap. Charles Stein puts it bluntly in Not Discussed: question time is risky because you are not in control of the agenda, and anyone in the room can ask the question that exposes a flaw.

You want to be the one who finds those flaws first.

2. The virtual audience for academic rooms

Beyond critique, I build a virtual audience before I ever step on stage.

For a recent talk to a mixed rheumatology group, I described four people to Claude in detail:

- A senior methodologist who does not care about my disease but cares deeply about study design

- A junior fellow who has never seen a patient with psoriatic arthritis

- A skeptical PI in an adjacent field who will ask “so what?”

- A statistician who will catch any sloppy interpretation of effect sizes

Then I dropped my outline in and asked: how does each of these four react to slide 7? To my main message? To the limitations slide?

The output is not perfect. But it gives me a usable map of where I am likely to lose people, where I am hand-waving, and where I need to slow down.
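If you want to reuse the same virtual audience across talks, it can help to keep the personas in one place and generate one critique prompt per person. The sketch below is a hypothetical helper, not a tool I am describing from my own workflow: the `Persona` class and `build_critique_prompts` function are illustrative names, the persona wording paraphrases the four people above, and the script only builds the prompt text. You would still paste each prompt into Claude (or whatever model you use) yourself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """One imagined audience member (hypothetical structure)."""
    role: str
    cares_about: str


# Paraphrases of the four audience members described above.
PERSONAS = [
    Persona("senior methodologist", "study design, not the disease itself"),
    Persona("junior fellow", "basic setup; you have never seen a patient with psoriatic arthritis"),
    Persona("skeptical PI in an adjacent field", "why any of this matters ('so what?')"),
    Persona("statistician", "correct interpretation of effect sizes"),
]


def build_critique_prompts(outline: str, target: str, personas=PERSONAS) -> list[str]:
    """Return one critique prompt per persona for a given part of the talk."""
    prompts = []
    for p in personas:
        prompts.append(
            f"You are a {p.role} in the audience. You care most about {p.cares_about}.\n"
            f"Here is my talk outline:\n{outline}\n\n"
            f"How do you react to {target}? Where do you push back, get lost, "
            f"or stop listening?"
        )
    return prompts


if __name__ == "__main__":
    outline = "1. Big picture\n2. Gap\n3. Question\n4. Key findings\n5. Core message"
    # Ask the same question of every persona, e.g. about slide 7.
    for prompt in build_critique_prompts(outline, "slide 7"):
        print(prompt, end="\n---\n")
```

Swapping `"slide 7"` for `"my main message"` or `"the limitations slide"` gives you the other questions from the list above without retyping the personas.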

This squares with what Stein argues across his chapter on talks: most academic audiences are diverse, with experts and novices in the same room. You hold the room by framing the big picture before the minutiae. Skip that, and you lose half the audience in the first few sentences.

The virtual audience helps me catch when I have skipped it.

3. Literature review with Research Boost

This is the part of my prep workflow that has shifted the most in the last year.

I keep my own running collection of papers in my field. I review the literature every Saturday. It is a ritual. PubMed alerts deliver three to five new papers most weeks.

But my collection has blind spots. I follow the people I follow. I read the journals I read. Adjacent fields and smaller journals slip past me.

So I now use the deep literature review feature in Research Boost for any upcoming talk.

I find this helpful for two reasons:

- It surfaces papers I have missed. Sometimes genuinely important ones. The diff between my curated list and what the AI returns tells me where I have been blind.

- It shows me angles I had not considered. A literature review built by AI on the same topic groups papers differently than I would. Sometimes that grouping reveals a sub-theme worth a slide. Sometimes it surfaces a counter-argument I had glossed over.

Both feed back into the talk before I rehearse. Better to find the missing paper now than during Q&A from the one person in the room who wrote it.
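The "diff" between your own list and an AI-generated review can be made literal if both are exported as DOIs. This is a minimal sketch under that assumption: I am not describing Research Boost's export format, the function names are hypothetical, and the DOIs in the example use the `10.1000` demo prefix rather than real papers. It lowercases and strips common DOI prefixes (DOIs are case-insensitive), then reports gaps in both directions.

```python
def normalize_doi(doi: str) -> str:
    """Lowercase a DOI and strip common URL/label prefixes."""
    d = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if d.startswith(prefix):
            d = d[len(prefix):]
    return d


def literature_diff(mine: list[str], ai: list[str]) -> dict[str, list[str]]:
    """Compare my curated DOIs against an AI-generated review's DOIs."""
    mine_set = {normalize_doi(d) for d in mine}
    ai_set = {normalize_doi(d) for d in ai}
    return {
        "missed_by_me": sorted(ai_set - mine_set),   # possible blind spots
        "missed_by_ai": sorted(mine_set - ai_set),   # papers the tool did not surface
        "shared": sorted(mine_set & ai_set),
    }


if __name__ == "__main__":
    # Hypothetical DOIs for illustration only.
    mine = ["10.1000/abc1", "https://doi.org/10.1000/ABC2"]
    ai = ["doi:10.1000/abc2", "10.1000/abc3"]
    for key, dois in literature_diff(mine, ai).items():
        print(key, dois)
```

The "missed_by_me" list is where to look for the paper someone in the audience wrote; the "missed_by_ai" list is a reminder that the tool has blind spots too.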

The value increasingly lies in what the AI is connected to, not just the model itself. A chatbot is a chatbot. A literature review tool that searches actual indexed papers, structures them, and feeds them into your prep is something else entirely.

For talk prep, that is the difference between brainstorming and changing what makes the cut.

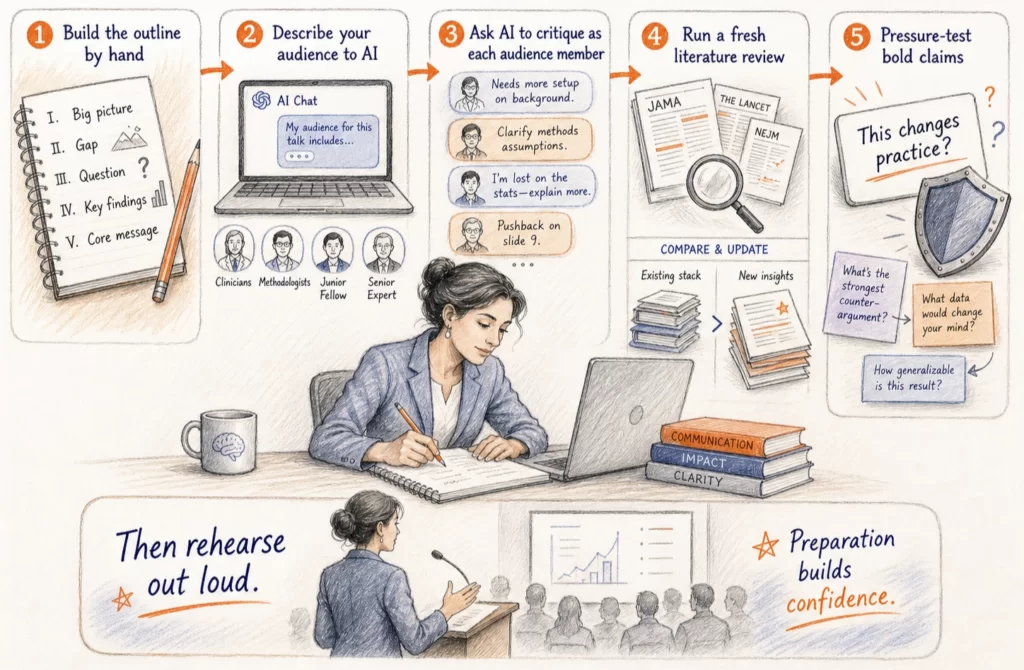

4. The five-step prep playbook

You do not need a society lecture audience to use any of this. The same workflow runs for grand rounds, journal club, a thesis defense, or a job talk.

- Build the outline by hand. Frame the big picture. State the gap. State the question. List your two or three key findings. Land on the core message in one sentence. If you cannot, you do not have a talk yet.

- Describe your audience to AI in detail. Single-disease focus or mixed? Junior or senior? Methodologists or clinicians? The more specific you get, the more useful the simulation.

- Ask AI to critique the outline as if it were each person in that audience. Skip “is this good?” Ask “where does the senior methodologist push back on slide 9?” Ask “what does the junior fellow miss without more setup?”

- Run a fresh literature review on the topic. Compare it against your own collection. Note the gaps in both directions. Add what matters. Drop what does not.

- Pressure-test the bold claims. If you are going to say “this changes practice,” ask AI: what is the strongest argument against this claim? What evidence would I need to defend it from a hostile reviewer?

The goal is not to soften every sharp point. The goal is to know exactly what you are walking into.

Then rehearse out loud, ideally in the room where you will present. Stein is right about this. Most of the great speakers I know rehearse far more than people assume.

5. What AI cannot do for your talk

It cannot judge what is clinically important to the patient sitting in your clinic on Monday.

It cannot tell you which slide will land in your specific institution, with its specific interests, history, and politics.

It cannot replace the moment you stand at the podium and read the room.

What it can do is take the version of your talk you would have given anyway, and make it tighter, harder to rebut, and more useful to the people sitting in front of you.

Which is the whole point.

If this was useful, send it to someone who has a talk on the calendar this month. And if your prep workflow looks different from mine, I want to hear about it. I am still tweaking my own.

AI is moving fast, so I’m adding a section on top papers on AI in research. This week I’ve included it here, but I’m debating whether to send it as a separate mid-week newsletter on Tuesdays instead. Let me know which you prefer: a Tuesday edition, or keeping it in the Friday newsletter. Just reply to this email.

Top Papers on AI in Research this week:

- AI Is Reshaping Biologic Drug Discovery – An April 2026 review in The Medicine Maker details how protein language models and deep learning now let researchers design novel antibodies, peptides, and mRNA candidates from scratch. Early AI-designed biologics are now entering clinical evaluation. The shift from theory to practice is underway.

- AI Scientist-v2 Achieves Peer Review Acceptance – Sakana AI’s system used agentic tree search to generate a research paper that passed peer review at an ICLR workshop. It autonomously forms hypotheses, runs experiments, analyzes data, and writes manuscripts. No human-authored code templates were required.

- AI Discovers New Physics in the Fourth State of Matter – Emory University researchers combined a custom neural network with 3D particle tracking in dusty plasma. Their model uncovered hidden non-reciprocal forces that classical theory had long missed. It captured these interactions with over 99% accuracy.

- Eli Lilly and Insilico Sign $2.75B AI Drug Partnership – Insilico Medicine’s generative AI platform, which spans target identification through molecule design, landed a landmark collaboration. One compound went from target identification to Phase I in under 30 months. That is roughly half the time traditional pharma typically needs.

- Stanford AI Index 2026 – Stanford HAI’s annual report found several AI models now match or exceed human performance on PhD-level science questions. LLM usage in published academic manuscripts has risen sharply. Computer science papers showed the steepest increase.

Top Papers on AI in Education this week:

- AI Feedback Sharpens Critical Thinking in Design Students – A Frontiers study ran a three-week experiment with 70 undergraduates split into AI feedback and control groups. Students who received structured AI process feedback showed stronger gains in design thinking, creative thinking, and reflective analysis. The scaffolding worked because it allowed multiple rounds of iteration in a short timeframe.

- Effective Personalized AI Tutors via LLM-Guided Reinforcement Learning – Researchers trained LLM tutors with reinforcement learning to proactively guide students rather than simply respond to them. The approach significantly raised the probability of correct answers on the very next student turn. Most existing AI tutors, the paper argues, are not optimized to maximize learning over a full dialogue.

- PELICAN: Cognitive Diagnosis Meets Adaptive Tutoring – PELICAN runs in two stages: a diagnostic pipeline first maps exactly what a student understands, then a dynamic module adapts instruction in real time. Head-to-head tests showed a 22.4% gain in task completion and an 18.7% boost in critical thinking stimulation compared to baseline models.

- Generative AI’s Impact on Authorship, Pedagogy, and Academic Integrity – This review synthesized 54 peer-reviewed studies and 6 international policy documents. It identifies four pressure points reshaping higher education: authorship attribution, pedagogical transformation, academic integrity, and the broader ethical ramifications of AI at scale.

- LLM Scaffolding, AI Literacy, and Academic Performance – A 2026 Springer study found that AI scaffolding improved student outcomes, but only for students with higher AI literacy. Prior knowledge also moderated the effect. The finding suggests these tools work best as complements to existing understanding, not substitutes.

📌 And just a reminder, I’ll be giving the Academic Writing with AI: Live Masterclass tomorrow (May 2, 2026, 10am CDT). If you haven’t registered yet, you can still register for free here: https://risingresearcheracademy.easywebinar.live/event-registration-7

If this weekend doesn’t work, I will be giving the same talk on May 9, 2026, 10am CDT. You can register for free here: https://risingresearcheracademy.easywebinar.live/event-registration-9